Does a compact retriever for late interactions index only once to deliver fast cross-lingual inference and accurate results? Liquid AI release LFM2-ColBERT-350MThe compact retriever for late interaction is suited to multi- and crosslingual search. The system can retrieve documents with high accuracy, even if they are indexed and written in multiple languages. Liquid AI’s team reported inference speed that was on par or faster than models which were 2.3x smaller. This is attributable to the LFM2 core. It comes complete with Hugging Face demonstration and detailed model cards for integration into retrieval enhanced generation systems.

Why late interactions are important?

The majority of production systems use either bi-encoders to increase speed, or cross encoders to improve accuracy. The late interaction is designed to achieve both benefits. Tokens are used to encode documents and queries separately. The system uses operations like MaxSim to compare token vectors during query times. It preserves the fine-grained interactions between tokens without incurring all of the costs associated with joint cross attention. This allows for pre-computations of documents, which improves the precision when ranking. In one single pass, it can be used as both a retriever and a ranking tool.

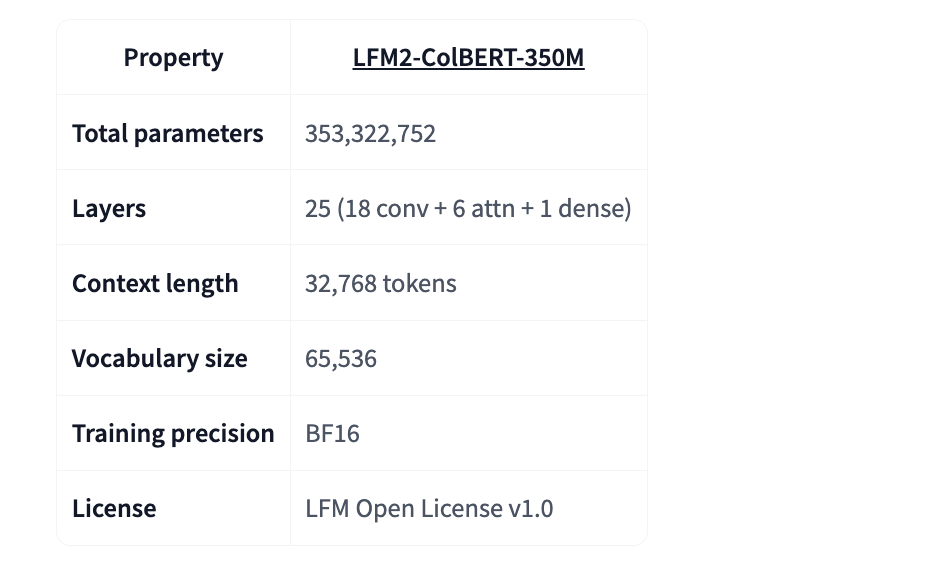

Model specification

There are 350 million total parameter values in LFM2-ColBERT. The 25 layers consist of 18 convolution blocks, 6 attention blocking blocks and 1 dense layer. The length of the context is 32k tokens. The size of the vocabulary is 65 536. MaxSim is the similarity function. Output dimension is 128. Precision of training is BF16. License: LFM Open License, Version 1.

Supported and evaluated languages

This model is available in 8 different languages. This includes English, Arabic and Chinese. Also included are Japanese, Korean, German, French, Japanese and Spanish. Italian and Portuguese have been added, making the total of 9 languages available for comparisons between document languages and query languages. It is important to make this distinction when deploying systems that are required to cover specific markets.

Setting up the evaluation and key findings

Liquid AI has extended the NanoBEIR Benchmark with Japanese and Korean. The extension is published for reproducibility. LFM2-ColBERT 350M is more multilingual than GTE-ModernColBERT v1 with 150M parameters. German, Arabic. Korean and Japanese performance gains are the most significant.

The Key Takeaways

- MaxSim’s token-level scoring preserves the finer interactions, while maintaining separate encoders. Document embeddings are precomputed with MaxSim and can then be queried quickly.

- It is possible to index documents in multiple languages and retrieve them in any of those. Model cards list 8 languages supported, whereas evaluations cover 9 languages when evaluating cross-lingual pair.

- On the NanoBEIR multilingual extension, LFM2-ColBERT-350M outperforms the prior late-interaction baseline (GTE-ModernColBERT-v1 at 150M) and maintains English performance.

- Inference speed is reported on par with models 2.3× smaller across batch sizes, attributed to the LFM2 backbone.

Editor’s Notes

Liquid AI’s LFM2-ColBERT 350M uses late interaction with MaxSim. It encodes questions and documents separately. Then, at the time of query, scores token vectors, preserving token level interactions. The system is designed to target multilingual and cross-lingual retrieval. It indexes once, and queries in multiple languages. Evaluations are described using a NanoBEIR extension. Inference speed is comparable to models that are 2.3 times larger, according to Liquid AI’s team. This can be attributed in part by the LFM2 core. The late interaction of the nanoscale is ready to go into production for RAG trials in multilingual languages.

Take a look at the Model Weights, Demo You can also find out more about the following: Technical details. Check out our GitHub Page for Tutorials, Codes and Notebooks. Also, feel free to follow us on Twitter Don’t forget about our 100k+ ML SubReddit Subscribe Now our Newsletter. Wait! What? now you can join us on telegram as well.

Asif Razzaq serves as the CEO at Marktechpost Media Inc. As an entrepreneur, Asif has a passion for harnessing Artificial Intelligence to benefit society. Marktechpost is his latest venture, a media platform that focuses on Artificial Intelligence. It is known for providing in-depth news coverage about machine learning, deep learning, and other topics. The content is technically accurate and easy to understand by an audience of all backgrounds. Over 2 million views per month are a testament to the platform’s popularity.