Visual reasoning tasks require AI models to combine perception and logical reasoning to understand and process visual data. Applications range from medical diagnosis to symbolic puzzles and image-based question answering. Success in this field requires more than object recognition: it demands dynamic adaptation, abstraction, and contextual inference. Models must analyze images, identify relevant features, and generate solutions or explanations tied directly to the visual input.

Current models hit a limit when they must adapt or apply their reasoning strategies to different tasks. Most are rigid, relying on hardcoded routines or pattern matching, and they struggle with unfamiliar problems or with solutions that fall outside their predefined toolkits. They also falter on tasks that require abstract reasoning, where a model has to look past the surface features of visual content. What is still missing is a system that can autonomously adapt its approach and construct new reasoning tools.

Previous approaches relied on single-turn processing, fixed toolkits, and predefined workflows. Systems such as Visual ChatGPT and ViperGPT integrate segmentation and detection models, but their workflows are fixed in advance, which limits creativity and flexibility: they cannot modify or add tools during a task. Their processing is linear, which reduces their usefulness in domains that require iterative thinking, and their multi-turn capabilities are absent or severely restricted, which rules out more complex analytical reasoning.

PyVision was developed to address these problems. Built by researchers from Shanghai AI Lab, Rice University, NUS, and SII, the framework does not rely on static modules as earlier approaches did. Instead, it uses Python as its primary language and creates tools in an interactive loop, so the model can adapt mid-task, make decisions, and refine its reasoning or code as it goes.
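To illustrate what "creating a tool" can mean in practice, here is the kind of small, task-specific helper an MLLM might write mid-task to zoom in on a faint region of an image. This is a minimal sketch, assuming a grayscale image already loaded as a NumPy array; the function name `crop_and_stretch` and its `(left, top, right, bottom)` box convention are hypothetical and not taken from PyVision's code.

```python
import numpy as np


def crop_and_stretch(image: np.ndarray, box: tuple[int, int, int, int]) -> np.ndarray:
    """Hypothetical helper: crop box=(left, top, right, bottom), then stretch contrast to 0..255."""
    left, top, right, bottom = box
    # NumPy images are indexed [row, column], i.e. [y, x].
    patch = image[top:bottom, left:right].astype(np.float64)
    lo, hi = patch.min(), patch.max()
    if hi == lo:
        # Flat region: there is no range to stretch, so return it unchanged.
        return patch.astype(np.uint8)
    # Linear contrast stretch: map [lo, hi] onto [0, 255].
    stretched = (patch - lo) / (hi - lo) * 255.0
    return np.round(stretched).astype(np.uint8)


# Synthetic dim image (value 40) with a faint, slightly brighter square (value 60).
img = np.full((100, 100), 40, dtype=np.uint8)
img[40:60, 40:60] = 60

zoomed = crop_and_stretch(img, (30, 30, 70, 70))
print(zoomed.shape, zoomed.min(), zoomed.max())  # (40, 40) 0 255
```

After the stretch, the faint square becomes pure white against a black background, which is the sort of intermediate result a model could inspect before deciding on its next step.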

A PyVision session starts when a user submits a visual query. An MLLM such as GPT-4.1 or Claude-4.0 then generates Python code in response to the prompt. The results of running that code (textual, visual, or numerical) are fed back into the model, which uses this feedback to revise its plan and generate new code. The loop repeats until a solution is found. Because the system maintains variable state across turns, later steps can build on earlier ones, which enables sequential reasoning. Internal safeguards, including process isolation and structured input/output, keep execution robust even during complex reasoning. Python libraries such as OpenCV, NumPy, and Pillow carry out operations like segmentation, OCR, and image enhancement.
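The generate-execute-feedback loop described above can be sketched in plain Python. This is a minimal, hypothetical illustration rather than PyVision's implementation: `fake_model` stands in for an MLLM by returning scripted snippets, and code runs in-process with `exec` against a shared namespace. That is enough to show variable state persisting across turns, but it deliberately omits the process isolation PyVision reportedly uses.

```python
import contextlib
import io
from typing import Callable, List, Optional


def run_code(code: str, namespace: dict) -> str:
    """Run one model-written snippet in a persistent namespace and capture its stdout."""
    buffer = io.StringIO()
    try:
        with contextlib.redirect_stdout(buffer):
            exec(code, namespace)
    except Exception as exc:
        # Errors are returned as feedback so the model can revise its code next turn.
        return f"Error: {type(exc).__name__}: {exc}"
    return buffer.getvalue()


def fake_model(history: List[str]) -> Optional[str]:
    """Hypothetical MLLM stand-in: returns the next snippet, or None when it is done.

    A real model would condition on the feedback in `history`; this stub only counts turns.
    """
    scripted = [
        "pixels = [12, 200, 37, 255, 90]",                             # turn 1: create state
        "bright = [p for p in pixels if p > 128]\nprint(len(bright))",  # turn 2: reuse it
    ]
    turn = len(history)
    return scripted[turn] if turn < len(scripted) else None


def agent_loop(model: Callable[[List[str]], Optional[str]], max_turns: int = 5) -> List[str]:
    namespace: dict = {}     # variables defined in one turn remain visible in later turns
    history: List[str] = []  # execution feedback passed back to the model
    for _ in range(max_turns):
        code = model(history)
        if code is None:
            break
        history.append(run_code(code, namespace))
    return history


feedback = agent_loop(fake_model)
print(feedback)  # ['', '2\n']
```

The second snippet reads `pixels`, which only exists because the first turn defined it in the shared namespace; a production system would add sandboxing and richer (e.g., image) feedback on top of this skeleton.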

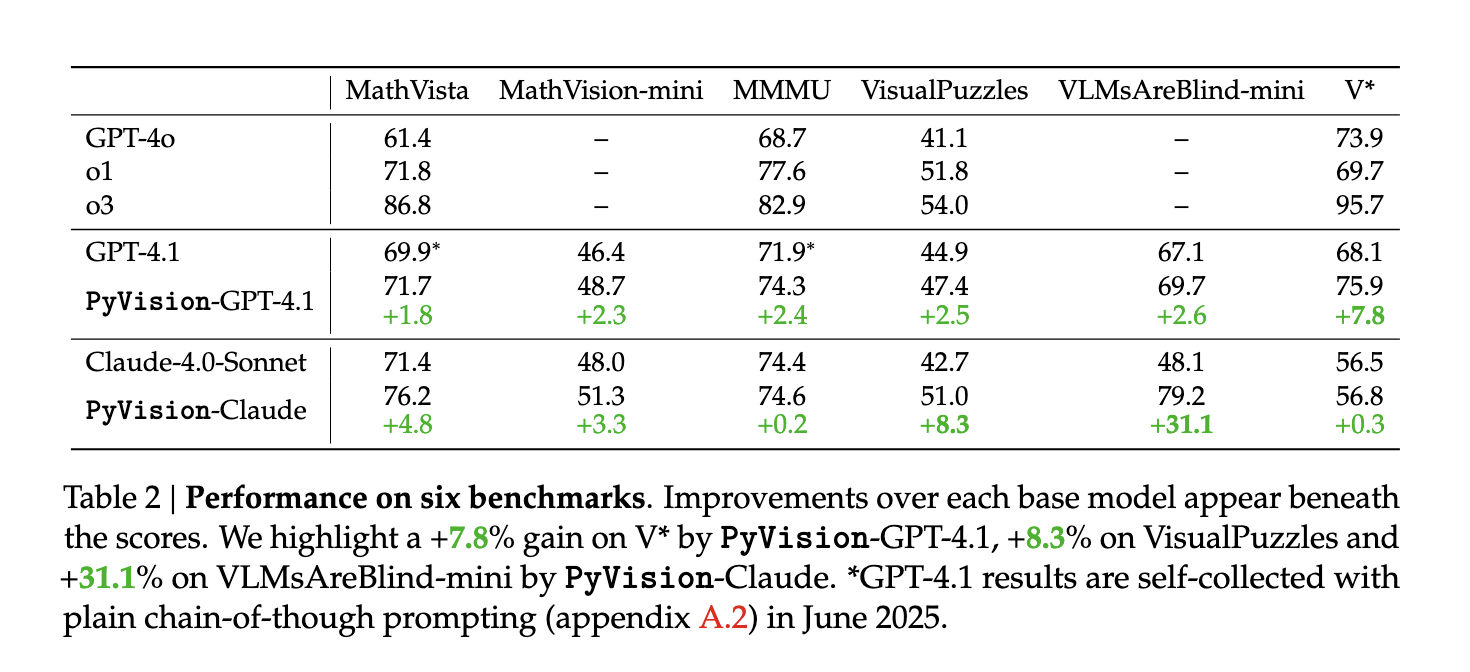

Quantitative benchmarks support PyVision's effectiveness. On the visual search benchmark V*, PyVision raised GPT-4.1's accuracy from 68.1% to 75.9%, a gain of 7.8 percentage points. On the VLMsAreBlind-mini benchmark, it raised Claude-4.0-Sonnet's accuracy from 48.1% to 79.2%, a gain of 31.1 points. Other tasks also improved: +2.4 points on MMMU and +2.5 points on VisualPuzzles for GPT-4.1, and +4.8 points on MathVista. The size of the improvement depends on the underlying model's strengths: perception-strong models benefit more on perception-heavy tasks, while reasoning-strong models gain more on abstract challenges. PyVision amplifies a model's existing strengths rather than replacing or masking them.
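Because these gains are differences between two accuracy percentages, they are absolute (percentage-point) gains, and the relative improvement over the baseline is a different number. A quick sketch using the V* and VLMsAreBlind-mini figures reported above; the `gains` helper is purely illustrative.

```python
from typing import Tuple

# Reported accuracies (%) from the benchmarks above: (baseline, with PyVision).
results = {
    "V* (GPT-4.1)": (68.1, 75.9),
    "VLMsAreBlind-mini (Claude-4.0-Sonnet)": (48.1, 79.2),
}


def gains(before: float, after: float) -> Tuple[float, float]:
    """Return (absolute gain in percentage points, relative gain in percent)."""
    absolute = after - before
    relative = absolute / before * 100
    return round(absolute, 1), round(relative, 1)


for name, (before, after) in results.items():
    pts, rel = gains(before, after)
    print(f"{name}: +{pts} points, +{rel}% relative")
```

By this measure, the 31.1-point gain on VLMsAreBlind-mini corresponds to roughly a 65% relative improvement over the baseline, while the 7.8-point gain on V* is about 11% relative.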

This research marks a significant advance in visual reasoning. PyVision addresses a fundamental limitation by enabling models to create problem-specific tools in real time, turning static systems into agents that solve problems iteratively. Its dynamic coupling of perception and reasoning is an important step toward AI that can adapt to complex visual problems.

Check out the Paper, GitHub Page, and Project. All credit for this research goes to the researchers of this project.


Nikhil is an intern at Marktechpost. He holds a dual integrated degree in Materials from the Indian Institute of Technology Kharagpur. An AI/ML enthusiast with a background in materials science, he is constantly researching AI/ML applications in biomaterials and other biomedical fields and exploring new advancements.