Every time you prompt an LLM, it doesn’t generate a complete answer all at once; it builds the response one token (roughly a word or word piece) at a time. At each step, the model predicts a probability distribution over possible next tokens, based on everything that has been written so far. But knowing the probabilities alone isn’t enough: the model also needs a strategy to decide which token to actually pick next.

Different strategies can completely change how the final output looks. Some make it more focused and precise, while others make it more creative or varied. This article explores four popular text generation strategies used by LLMs: Greedy Search, Beam Search, Nucleus Sampling (Top-p), and Temperature Sampling, explaining how each one works.

Greedy Search

Greedy Search is the simplest decoding strategy: at each step it picks the most likely token given the current context. While it’s fast and easy to implement, it doesn’t always produce the most coherent or meaningful sequence, much like making the best local choice without considering the overall outcome. Because it follows only one branch of the probability tree, it can miss better sequences that begin with a less likely token. This often leads to boring, repetitive or generic text, which makes it a poor fit for open-ended text generation tasks.
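As a minimal sketch of this idea, the loop below repeatedly takes the highest-probability next token. The `TOY_MODEL` table and the `next_token_probs` helper are hypothetical stand-ins for a real LLM, which would compute the distribution from the full context; all probabilities are made up for illustration.

```python
# Hypothetical stand-in for an LLM: maps the last token to a
# distribution over next tokens. All probabilities are made up.
TOY_MODEL = {
    "<s>": {"the": 0.7, "a": 0.3},
    "the": {"cat": 0.5, "dog": 0.4, "the": 0.1},
    "a": {"dog": 0.6, "cat": 0.4},
    "cat": {"sat": 0.6, "</s>": 0.4},
    "dog": {"sat": 0.55, "</s>": 0.45},
    "sat": {"</s>": 0.9, "the": 0.1},
}


def next_token_probs(context):
    """Return next-token probabilities (toy model: only the last token matters)."""
    return TOY_MODEL[context[-1]]


def greedy_decode(max_steps=10):
    context = ["<s>"]
    for _ in range(max_steps):
        probs = next_token_probs(context)
        # Greedy step: take the single most likely token, with no lookahead.
        token = max(probs, key=probs.get)
        context.append(token)
        if token == "</s>":
            break
    return context


print(greedy_decode())
```

Because every step is an argmax, running the function twice on the same input always gives the same sequence, which is one reason greedy output tends to feel generic.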

Beam Search

Beam Search improves on greedy search: instead of tracking only one sequence at a time, it keeps track of several candidate sequences (called beams). At every step it expands the top K sequences, which lets the model explore multiple promising paths in the probability tree and possibly discover higher-quality completions. The parameter K (beam width) controls the trade-off between quality and computation: larger beams usually find higher-probability sequences but are slower.

However, beam search is less effective for open-ended text generation. Because the algorithm favors high-probability continuations, its output shows less diversity and more repetition. This is often called “neural text degeneration,” where the model overuses certain words or phrases.

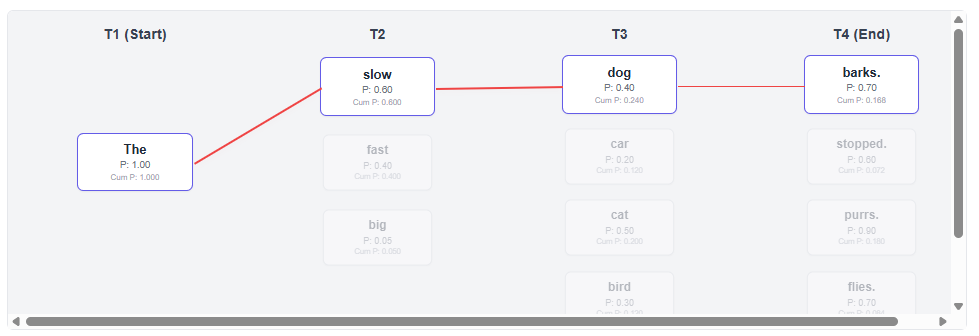

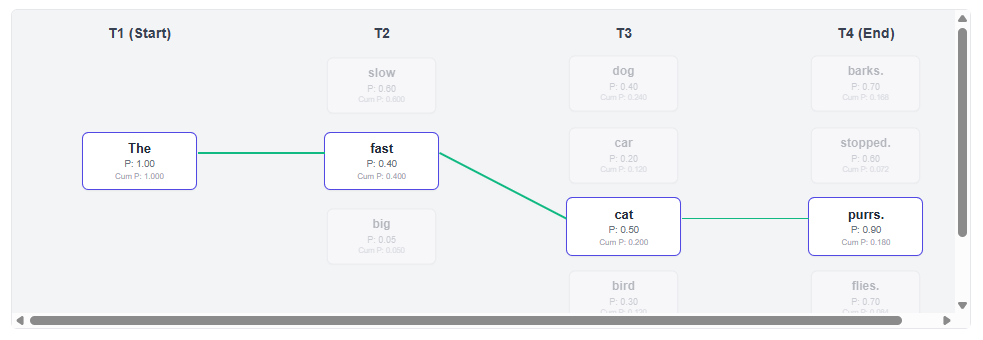

Greedy Search vs. Beam Search:

- Greedy Search (K=1) always takes the highest local probability:

  - At the second step, it chooses “slow” (0.6) over “fast” (0.4).

  - Path: “The slow dog barks.” (Final Probability: 0.1680)

- Beam Search (K=2) keeps both the “slow” and “fast” paths alive:

  - At T3, the path that starts with “fast” turns out to have more potential for a high-probability ending.

  - Path: “The fast cat purrs.” (Final Probability: 0.1800)

Beam Search is able to explore a path that initially had a lower probability, which leads to a better overall sequence score.
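The comparison above can be reproduced with a small sketch. Only the “slow” (0.6) and “fast” (0.4) probabilities and the two final path probabilities come from the example; every other number in the hypothetical `TREE` table is an assumption, chosen so that the products work out to 0.1680 and 0.1800. Greedy search is treated here as beam search with K=1.

```python
import math

# Hypothetical probability tree. Only "slow" (0.6), "fast" (0.4) and the two
# final path probabilities come from the example; the other numbers are
# assumptions chosen so that the products match 0.1680 and 0.1800.
TREE = {
    ("The",): {"slow": 0.6, "fast": 0.4},
    ("The", "slow"): {"dog": 0.7, "cat": 0.3},
    ("The", "fast"): {"cat": 0.9, "dog": 0.1},
    ("The", "slow", "dog"): {"barks": 0.4, "runs": 0.3, "sleeps": 0.3},
    ("The", "slow", "cat"): {"purrs": 0.5, "sleeps": 0.5},
    ("The", "fast", "cat"): {"purrs": 0.5, "meows": 0.3, "sleeps": 0.2},
    ("The", "fast", "dog"): {"barks": 0.6, "runs": 0.4},
}


def beam_search(k, steps=3):
    """Keep the k highest-scoring partial sequences at every step."""
    beams = [(("The",), 0.0)]  # (sequence, cumulative log-probability)
    for _ in range(steps):
        candidates = []
        for seq, score in beams:
            for token, p in TREE[seq].items():
                # Summing log-probabilities equals multiplying probabilities.
                candidates.append((seq + (token,), score + math.log(p)))
        # Prune: keep only the k best candidates for the next step.
        beams = sorted(candidates, key=lambda c: c[1], reverse=True)[:k]
    best_seq, best_score = beams[0]
    return " ".join(best_seq), math.exp(best_score)


for k in (1, 2):
    sentence, prob = beam_search(k)
    print(f"K={k}: {sentence} ({prob:.4f})")
```

With K=1 the “fast” branch is discarded after the first step, so its stronger continuation is never seen; with K=2 it survives long enough to overtake the greedy path.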

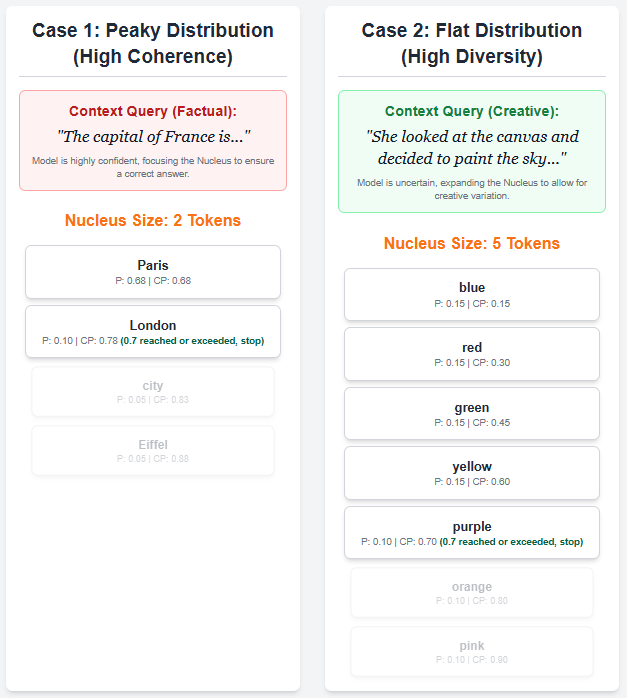

Top-p Sampling (also called Nucleus Sampling) is a probabilistic decoding strategy that adjusts the number of tokens considered at each step. Instead of keeping a fixed number of tokens, it selects the smallest set of most likely tokens whose cumulative probability reaches a threshold p (for example 0.7). These tokens form the “nucleus,” and the next token is randomly sampled from this set after renormalizing their probabilities.

This allows the model to balance diversity and coherence: it samples from a broader range when many tokens have similar probabilities (flat distribution) and narrows down to the most likely tokens when the distribution is sharp (peaky). As a result, top-p sampling tends to produce text that is more diverse, natural and contextually appropriate than greedy or beam search.
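A minimal sketch of the nucleus selection is shown below, assuming the next-token distribution is already available as a dictionary. The function names and the example distributions are hypothetical and only meant to show how the nucleus grows or shrinks with the shape of the distribution.

```python
import random


def nucleus(probs, p=0.7):
    """Return the smallest set of top tokens whose cumulative probability >= p."""
    # Rank tokens from most to least likely.
    ranked = sorted(probs.items(), key=lambda kv: kv[1], reverse=True)
    selected, cumulative = [], 0.0
    for token, prob in ranked:
        selected.append((token, prob))
        cumulative += prob
        if cumulative >= p:
            break
    return selected


def top_p_sample(probs, p=0.7, rng=random):
    """Sample one token from the nucleus."""
    selected = nucleus(probs, p)
    tokens = [token for token, _ in selected]
    weights = [prob for _, prob in selected]
    # random.choices treats weights as relative, which renormalizes the nucleus.
    return rng.choices(tokens, weights=weights, k=1)[0]


# Made-up distributions for illustration.
peaky = {"the": 0.85, "a": 0.10, "an": 0.05}
flat = {"cat": 0.30, "dog": 0.27, "bird": 0.23, "fish": 0.20}

print([t for t, _ in nucleus(peaky)])  # nucleus of a sharp distribution
print([t for t, _ in nucleus(flat)])   # nucleus of a flat distribution
print(top_p_sample(flat))
```

With p = 0.7, the peaky distribution yields a one-token nucleus, while the flat one keeps three candidates, so the amount of randomness adapts to how confident the model is.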

Temperature Sampling

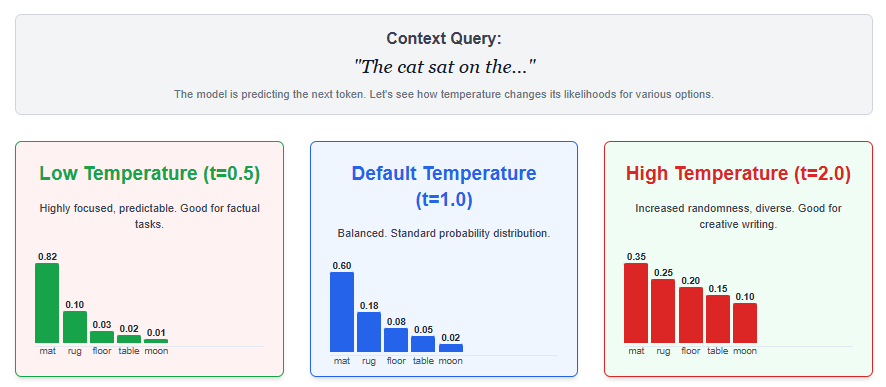

Temperature sampling controls the randomness of text generation by adjusting a temperature parameter (t) in the softmax function that converts logits into probabilities. Lower temperatures (t < 1) sharpen the distribution, so the most likely tokens are chosen even more often and the output becomes more predictable.

Higher temperatures (t > 1) flatten the distribution, introducing more randomness and diversity but at the cost of coherence. In practice, temperature is a convenient knob for balancing creativity with precision: low values produce predictable, nearly deterministic outputs, while high values produce more creative and varied text.
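The effect of t can be sketched with a temperature-scaled softmax, where each logit is divided by t before normalizing. The function name and the example logits are illustrative assumptions, not values from any particular model.

```python
import math


def softmax_with_temperature(logits, t=1.0):
    """Convert logits to probabilities: p_i = exp(z_i / t) / sum_j exp(z_j / t)."""
    if t <= 0:
        raise ValueError("temperature must be positive")
    scaled = [z / t for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


logits = [2.0, 1.0, 0.5]  # made-up logits for three candidate tokens
for t in (0.5, 1.0, 2.0):
    probs = softmax_with_temperature(logits, t)
    print(t, [round(p, 3) for p in probs])
```

As t shrinks, the probability of the top token moves toward 1, and as t grows, the three probabilities move toward each other. Sampling from the resulting distribution then gives the behavior described above.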

The optimal temperature often depends on the task — for instance, creative writing benefits from higher values, while technical or factual responses perform better with lower ones.