Large language models (LLMs) have reshaped AI reasoning, and parallel thinking and self-consistency methods are often cited as pivotal advances. These techniques face a fundamental trade-off: sampling several reasoning paths improves accuracy, but at a high computational cost. Researchers from Meta AI and UCSD present DeepConf (Deep Think with Confidence), a new method that nearly eliminates this trade-off. DeepConf delivers state-of-the-art reasoning performance with dramatic efficiency gains, achieving, for example, 99.9% accuracy on the AIME 2025 math competition with the open-source GPT-OSS-120B while generating up to 85% fewer tokens than conventional parallel thinking.

Why DeepConf?

Parallel thinking with majority voting is the de facto standard for boosting LLM reasoning: generate several candidate solutions and choose the most common answer. Although effective, this approach has notable limitations:

- Diminishing returns: accuracy plateaus or even declines as more paths are sampled, because low-quality reasoning traces can dilute the vote.

- High overhead: generating hundreds or even thousands of reasoning traces per query is costly in both time and compute.
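For reference, the majority-voting baseline described above can be sketched in a few lines. This is a minimal illustration, not code from the paper; the function name `majority_vote` and the sample answers are hypothetical.

```python
from collections import Counter
from typing import Iterable


def majority_vote(answers: Iterable[str]) -> str:
    """Return the most frequent final answer among sampled reasoning traces."""
    counts = Counter(answers)
    if not counts:
        raise ValueError("need at least one sampled answer")
    # most_common(1) returns [(answer, count)]; ties go to the first-seen answer.
    return counts.most_common(1)[0][0]


# Hypothetical final answers extracted from six sampled traces.
sampled_answers = ["42", "41", "42", "17", "42", "41"]
winner = majority_vote(sampled_answers)
```

Every trace gets exactly one vote here, which is why a large number of low-quality traces can outvote a few good ones.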

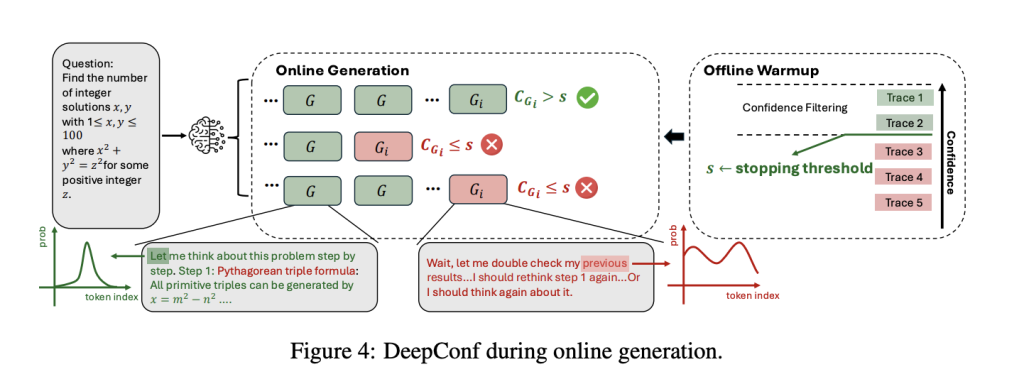

DeepConf addresses these issues by exploiting the LLM's own confidence signals. Rather than treating all reasoning traces equally, it dynamically filters out low-confidence paths, either during generation (online) or afterward (offline), and uses only the most reliable trajectories to inform the final answer. The strategy is model-agnostic, requires no training or hyperparameter tuning, and can be plugged into any existing model or serving framework.

DeepConf: Confidence as a Guide

DeepConf introduces several advances in how confidence is measured and used:

- Token confidence: computed from the log-probabilities at each generated token. It gives a local measure of how certain the model is.

- Group confidence: the average token confidence over a sliding window (e.g., 2048 tokens), which provides a smoothed, intermediate signal.

- Tail confidence: focuses on the final segment of the reasoning trace, where the answer usually appears, in order to catch late breakdowns.

- Lowest group confidence: the least confident segment in the trace, which often signals a collapse of the reasoning.

- Bottom-percentile confidence: highlights the worst segments, which are the most predictive of errors.
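The confidence signals listed above can be sketched as plain functions over per-token scores. This is an illustrative sketch under stated assumptions, not the authors' implementation: token confidence is assumed here to be the negative mean log-probability of the top-k candidates at a position, and all function names, window sizes, and sample values are hypothetical.

```python
import math
from typing import List, Sequence


def token_confidence(topk_logprobs: Sequence[float]) -> float:
    """Assumed definition: negative mean log-probability of the top-k candidates.

    A peaked distribution pushes the non-top candidates to very negative
    log-probabilities, so the score rises as the model gets more certain.
    """
    return -sum(topk_logprobs) / len(topk_logprobs)


def group_confidences(token_confs: Sequence[float], window: int) -> List[float]:
    """Mean token confidence over each sliding window of `window` tokens."""
    if not token_confs:
        return []
    if len(token_confs) <= window:
        return [sum(token_confs) / len(token_confs)]
    running = sum(token_confs[:window])
    groups = [running / window]
    for i in range(window, len(token_confs)):
        running += token_confs[i] - token_confs[i - window]
        groups.append(running / window)
    return groups


def tail_confidence(token_confs: Sequence[float], tail: int) -> float:
    """Mean confidence over the last `tail` tokens (tail must be >= 1)."""
    last = token_confs[-tail:]
    return sum(last) / len(last)


def lowest_group_confidence(group_confs: Sequence[float]) -> float:
    """Confidence of the weakest window in the trace."""
    return min(group_confs)


def bottom_percentile_confidence(group_confs: Sequence[float], percent: float) -> float:
    """Mean of the lowest `percent`% of window confidences (at least one window)."""
    k = max(1, math.ceil(len(group_confs) * percent / 100))
    worst = sorted(group_confs)[:k]
    return sum(worst) / k


# Toy trace of four token confidences with a window of two tokens.
confs = [1.0, 2.0, 3.0, 4.0]
groups = group_confidences(confs, window=2)
```

In practice the window would be far larger (the article mentions 2048 tokens as an example); the tiny values here only keep the arithmetic easy to follow.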

These metrics are then used to weight votes (high-confidence traces count more) or to filter traces (only the top η% most confident traces are kept). In online mode, DeepConf stops generating a trace as soon as its confidence falls below a dynamically calibrated threshold, which dramatically reduces wasted computation.
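The filtering and confidence-weighted voting described above might look like the following in the offline setting. This is a minimal sketch: the `Trace` tuple, the function names, and the sample scores are assumptions for illustration, and trace confidences are assumed to be positive so they can serve directly as vote weights.

```python
import math
from collections import defaultdict
from typing import Dict, List, Tuple

# Hypothetical representation: (final answer, trace-level confidence).
Trace = Tuple[str, float]


def filter_top_percent(traces: List[Trace], eta: float) -> List[Trace]:
    """Keep the top eta% most confident traces (always at least one)."""
    keep = max(1, math.ceil(len(traces) * eta / 100))
    return sorted(traces, key=lambda t: t[1], reverse=True)[:keep]


def weighted_vote(traces: List[Trace]) -> str:
    """Sum confidence per answer and return the answer with the largest total."""
    totals: Dict[str, float] = defaultdict(float)
    for answer, conf in traces:
        totals[answer] += conf
    return max(totals, key=totals.get)


# Toy example: "41" wins a plain head count (3 vs 2), but its traces are weak.
traces = [("42", 9.0), ("42", 8.5), ("41", 3.0), ("41", 2.5), ("41", 2.0), ("17", 1.0)]
kept = filter_top_percent(traces, eta=50)
answer = weighted_vote(kept)
```

Filtering and weighting are independent knobs: `weighted_vote(traces)` without filtering also returns "42" in this toy case, because the two confident traces outweigh the three uncertain ones.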

Key Results: Performance & Efficiency

DeepConf was evaluated on multiple reasoning benchmarks (AIME 2024/2025, HMMT 2025, BRUMO25, GPQA-Diamond) and models (DeepSeek-8B, Qwen3-8B/32B, GPT-OSS-20B/120B). The results are striking:

| Model | Dataset | Pass@1 Acc | Cons@512 Acc | DeepConf@512 Acc | Tokens Saved |
|---|---|---|---|---|---|
| GPT-OSS-120B | AIME 2025 | 91.8% | 97.0% | 99.9% | -84.7% |
| DeepSeek-8B | AIME 2024 | 83.0% | 86.7% | 93.3% | -77.9% |
| Qwen3-32B | AIME 2024 | 80.6% | 85.3% | 90.8% | -56.0% |

Performance boost: Across models and datasets, DeepConf improves accuracy by up to 10 percentage points over standard majority voting, often saturating the benchmark's upper limit.

Ultra-efficient: By stopping low-confidence traces early, DeepConf reduces the total number of generated tokens by 43–85% with no loss in final accuracy (and in many cases an improvement).

Plug & play: DeepConf works out of the box with any model, with no fine-tuning, no hyperparameter search, and no changes to the underlying architecture. It can be dropped into an existing serving stack (e.g., vLLM) with about 50 lines of code.

Easy to deploy: The method can be implemented as a lightweight extension to existing inference engines. It requires only token-level logprobs and a small amount of logic for confidence calculation and early stopping.

Simple Integration: Minimal Code, Maximum Impact

DeepConf is easy to implement. For vLLM, the changes are small:

- Extend the logprobs processor to track sliding-window confidence.

- Add an early-stop check before emitting each output.

- Pass the confidence thresholds through the API, with no model retraining required.

With these changes, DeepConf can be enabled in production with just one additional setting.
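The early-stop logic can be sketched independently of any serving engine. The code below is an engine-agnostic illustration, not vLLM code and not the authors' implementation: it assumes per-token confidences arrive as a stream, that the threshold is calibrated from the lowest group confidence of a few warmup traces, and that all names (`calibrate_threshold`, `generate_with_early_stop`) and values are hypothetical.

```python
import math
from collections import deque
from typing import Deque, Iterable, List, Sequence


def calibrate_threshold(warmup_lowest_confs: Sequence[float], eta: float) -> float:
    """Pick a threshold that the top eta% of warmup traces would just pass."""
    ranked = sorted(warmup_lowest_confs, reverse=True)
    keep = max(1, math.ceil(len(ranked) * eta / 100))
    return ranked[keep - 1]


def generate_with_early_stop(
    token_confs: Iterable[float], window: int, threshold: float
) -> List[float]:
    """Consume streamed token confidences; stop once the window mean drops too low.

    Returns the confidences of the tokens that were actually generated.
    """
    recent: Deque[float] = deque(maxlen=window)
    running = 0.0
    emitted: List[float] = []
    for conf in token_confs:
        if len(recent) == window:
            running -= recent[0]  # oldest value is about to be evicted
        recent.append(conf)
        running += conf
        emitted.append(conf)
        # Only judge the trace once a full window is available.
        if len(recent) == window and running / window < threshold:
            break
    return emitted


# Warmup: lowest group confidence observed in four fully generated traces.
threshold = calibrate_threshold([5.0, 4.0, 3.0, 2.0], eta=50)

# A trace whose confidence collapses midway is cut off before it finishes.
stream = [6.0, 6.0, 5.0, 2.0, 1.0, 6.0, 6.0]
generated = generate_with_early_stop(stream, window=2, threshold=threshold)
```

In this toy run the trace is stopped after four of its seven tokens; the tokens never generated are where the savings come from.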

Conclusion

Meta AI's DeepConf represents a leap forward in LLM reasoning, delivering both peak accuracy and unprecedented efficiency. By dynamically leveraging the model's internal confidence, DeepConf achieves what was previously out of reach for open-source models: near-perfect results on elite reasoning tasks at a fraction of the computational cost.

FAQs

FAQ 1: How does DeepConf improve accuracy and efficiency compared to majority voting?

DeepConf's confidence-aware filtering and voting prioritize traces in which the model is more certain, boosting accuracy by up to 10 percentage points over majority voting alone. Terminating low-confidence traces early cuts token usage by up to 85%, yielding large gains in both performance and efficiency.

FAQ 2: Does DeepConf work with all language models or serving frameworks?

Yes. DeepConf is fully model-agnostic and can be integrated into any serving stack, for both open-source and commercial models, without modification or retraining. Deployment requires only minimal changes (about 50 lines of code for vLLM), using token logprobs to compute confidence and handle early stopping.

FAQ 3: Do I need to retrain, use special data, or perform complex tuning with DeepConf?

No. DeepConf operates entirely at inference time and requires no additional model training, fine-tuning, or hyperparameter search. It relies only on token logprob outputs and works immediately with standard API settings. It is scalable and robust, and it can be deployed on real workloads without interruption.


Asif Razzaq is the CEO of Marktechpost Media Inc. A visionary engineer and entrepreneur, he is dedicated to harnessing the potential of artificial intelligence for social good. His most recent venture is Marktechpost, a platform covering machine learning and deep learning news that aims to be both technically sound and accessible to a broad audience. The platform draws over 2 million views per month.