Advances in artificial intelligence are rapidly closing the gap between digital reasoning and real-world interaction. At the forefront of this progress is embodied AI, the field focused on enabling robots to perceive, reason, and act effectively in physical environments. As industries look to automate complex spatial and temporal tasks, from household assistance to logistics, AI systems that truly understand their surroundings and can plan actions become critical.

RoboBrain 2.0: A Breakthrough in Embodied Vision-Language AI

Developed by the Beijing Academy of Artificial Intelligence (BAAI), RoboBrain 2.0 is an important milestone in the design of foundation models for robotics and embodied AI. It unifies spatial perception, high-level reasoning, and long-horizon planning in a single architecture. This versatility supports a range of embodied tasks, including affordance prediction, spatial object localization, trajectory planning, and multi-agent collaboration.

Key Highlights of RoboBrain 2.0

- Two Scalable Versions: A resource-efficient 7B model and a high-performance 32B model for more demanding tasks.

- Unified Multi-Modal Architecture: Couples a high-resolution vision encoder with a decoder-only language model, allowing seamless integration of images, videos, text instructions, and scene graphs.

- Advanced Spatial and Temporal Reasoning: Excels at tasks that require a thorough understanding of objects, motion forecasting, and complex multi-step planning.

- Open-Source Foundation: Built on the FlagScale platform to ease research adoption and reproducibility.
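To give a rough sense of what "resource-efficient" versus "high-performance" means for the 7B and 32B variants, the following back-of-the-envelope sketch estimates the memory needed to hold the weights alone at common numeric precisions. The nominal parameter counts (7e9 and 32e9) and the choice of precisions are assumptions for illustration; real deployments also need memory for activations, caches, and the vision encoder's inputs.

```python
# Rough weights-only memory estimate; nominal parameter counts are assumed.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16/bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, bytes_per_param: float) -> float:
    """Memory for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


for name, params in {"7B": 7e9, "32B": 32e9}.items():
    for precision, nbytes in BYTES_PER_PARAM.items():
        print(f"{name} @ {precision}: {weight_memory_gb(params, nbytes):.1f} GB")
```

At 16-bit precision this works out to about 14 GB for the 7B variant and 64 GB for the 32B variant, which is why the smaller model is the more practical choice on a single accelerator.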

Inside RoboBrain 2.0: Architecture and Training

Multi-Modal Input Pipeline

RoboBrain 2.0 ingests a mix of sensory and symbolic data:

- Multi-View Images & Videos: Supports high-resolution, egocentric, and third-person visual streams for rich spatial context.

- Natural Language Instructions: Interprets a wide range of commands, from basic navigation to complex manipulation instructions.

- Scene Graphs: Processes structured representations of objects, their relationships, and environmental layouts.
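As a rough illustration of how these three input types might sit side by side, the sketch below models a scene graph as objects plus relation triples and flattens it into text next to an instruction and image placeholders. The `SceneGraph` class, the `build_prompt` helper, and the `<image>` placeholder string are all hypothetical names invented for this example; they are not RoboBrain 2.0's actual input format.

```python
from dataclasses import dataclass, field


@dataclass
class SceneGraph:
    """Hypothetical container: objects plus (subject, relation, object) triples."""
    objects: list[str] = field(default_factory=list)
    relations: list[tuple[str, str, str]] = field(default_factory=list)

    def to_text(self) -> str:
        """Flatten the graph into one line of text that a tokenizer could consume."""
        objs = ", ".join(self.objects)
        rels = "; ".join(f"{s} {r} {o}" for s, r, o in self.relations)
        return f"objects: {objs} | relations: {rels}"


def build_prompt(instruction: str, graph: SceneGraph, num_images: int) -> str:
    """Combine the three input types into a single text prompt.

    "<image>" is a placeholder chosen for this sketch; it marks where
    visual tokens would be spliced in.
    """
    image_slots = " ".join("<image>" for _ in range(num_images))
    return f"{image_slots}\nscene: {graph.to_text()}\ninstruction: {instruction}"


graph = SceneGraph(
    objects=["mug", "table", "sink"],
    relations=[("mug", "on", "table"), ("sink", "left_of", "table")],
)
prompt = build_prompt("Put the mug in the sink.", graph, num_images=2)
print(prompt)
```

Serializing the graph as plain text is only one possible design; it is used here because it lets a single tokenizer handle both instructions and scene structure.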

The system's tokenizer encodes language and scene graphs, while a specialized vision encoder uses adaptive positional encoding and windowed attention to process visual data. Visual features are projected into the language model's embedding space by a multi-layer perceptron, producing unified multimodal token sequences.
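The projection step can be sketched in a few lines of plain Python. This is a toy illustration under stated assumptions, not the model's implementation: the two-layer shape, the GELU activation, the random weights, and the tiny dimensions are all chosen for the example. It shows only the general idea that visual features are mapped to the language model's width so they can share one token sequence with text embeddings.

```python
import math
import random


def linear(x, weight, bias):
    """Compute weight @ x + bias for a single vector x (plain Python lists)."""
    return [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weight, bias)]


def gelu(x):
    """Tanh approximation of GELU, applied element-wise."""
    c = math.sqrt(2.0 / math.pi)
    return [0.5 * v * (1.0 + math.tanh(c * (v + 0.044715 * v ** 3))) for v in x]


def random_matrix(rows, cols, rng):
    """Small random values standing in for learned weights or encoder outputs."""
    return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]


def make_projector(vision_dim, hidden_dim, model_dim, rng):
    """Build a two-layer MLP mapping vision features to the language model width."""
    w1, b1 = random_matrix(hidden_dim, vision_dim, rng), [0.0] * hidden_dim
    w2, b2 = random_matrix(model_dim, hidden_dim, rng), [0.0] * model_dim
    return lambda feat: linear(gelu(linear(feat, w1, b1)), w2, b2)


rng = random.Random(0)
VISION_DIM, HIDDEN_DIM, MODEL_DIM = 6, 8, 4  # toy sizes, not the real model's

project = make_projector(VISION_DIM, HIDDEN_DIM, MODEL_DIM, rng)

# Stand-ins for encoder outputs: 3 visual patches and 2 text token embeddings.
patch_features = random_matrix(3, VISION_DIM, rng)
text_embeddings = random_matrix(2, MODEL_DIM, rng)

visual_tokens = [project(p) for p in patch_features]
sequence = visual_tokens + text_embeddings  # one sequence, one shared width

print(len(sequence), len(sequence[0]))  # prints "5 4"
```

After projection every token, visual or textual, has the same width, so the decoder can attend over them as a single sequence.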

Three-Phase Training Process

RoboBrain 2.0 achieves its embodied intelligence through a progressive, three-phase training curriculum:

- Foundational Spatiotemporal Learning: Builds core visual and language capabilities, grounding spatial perception and basic temporal understanding.

- Embodied Task Enhancement: Refines the model with real-world datasets and multi-view videos, targeting tasks such as 3D affordance analysis and robot-centric scene understanding.

- Chain-of-Thought Reasoning: Integrates explainable step-by-step reasoning, drawing on diverse activity traces and task decompositions.

Scalable Infrastructure for Research and Deployment

RoboBrain 2.0 leverages the FlagScale platform, which offers:

- Hybrid parallelism for efficient use of compute resources

- Pre-allocated memory and high-throughput data pipelines to reduce training cost and latency

- Automatic fault tolerance to keep large-scale distributed systems stable

This infrastructure allows rapid model training, easy experimentation, and deployment in real-world robotic applications.

Real-World Applications and Performance

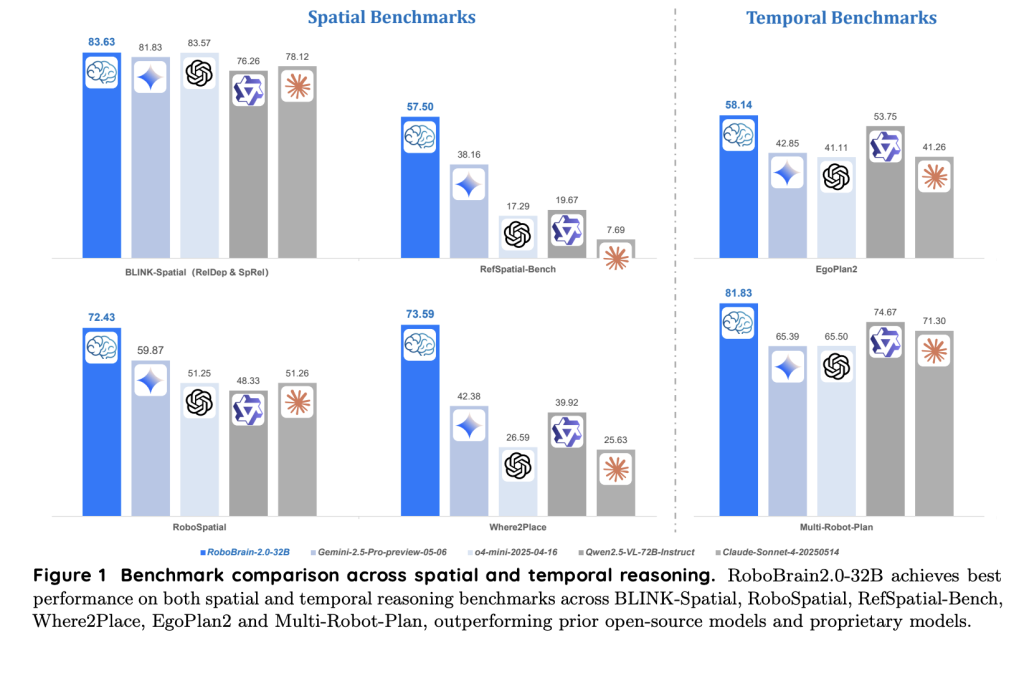

RoboBrain 2.0 has been evaluated on a broad suite of embodied AI benchmarks, where it consistently outperforms both open-source and proprietary models in spatial and temporal reasoning. Key capabilities include:

- Affordance Prediction: Identifying the functional regions of an object for grasping, pushing, or other interaction

- Precise Object Localization & Pointing: Following textual instructions to accurately find and point to objects or empty spaces in complex scenes

- Trajectory Forecasting: Planning efficient, obstacle-aware end-effector movements

- Multi-Agent Planning: Decomposing tasks and coordinating multiple robots toward collaborative goals
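To make the multi-agent planning capability more concrete, here is a minimal sketch of the coordination half of the problem: given a task that has already been decomposed into subtasks with dependencies, assign each subtask to a capable robot in a valid order. In RoboBrain 2.0 the decomposition comes from the model's reasoning; here it is written by hand. The `plan` function, the skill names, and the least-loaded assignment rule are assumptions made for this example, not the model's method.

```python
from graphlib import TopologicalSorter


def plan(subtasks, robots):
    """Assign each subtask to a capable robot, in dependency order.

    subtasks: {name: (required_skill, [names of prerequisite subtasks])}
    robots:   {robot_name: set of skills}
    Returns a list of (subtask, robot) pairs.
    """
    graph = {name: deps for name, (_, deps) in subtasks.items()}
    order = TopologicalSorter(graph).static_order()  # prerequisites come first
    load = {robot: 0 for robot in robots}
    schedule = []
    for name in order:
        skill = subtasks[name][0]
        capable = [r for r, skills in robots.items() if skill in skills]
        if not capable:
            raise ValueError(f"no robot can perform {name!r} (needs {skill!r})")
        robot = min(capable, key=load.get)  # least-loaded capable robot
        load[robot] += 1
        schedule.append((name, robot))
    return schedule


# Hand-written decomposition of "bring the milk to the table".
subtasks = {
    "open_fridge": ("manipulate", []),
    "pick_milk": ("manipulate", ["open_fridge"]),
    "carry_to_table": ("navigate", ["pick_milk"]),
    "close_fridge": ("manipulate", ["pick_milk"]),
}
robots = {"arm_bot": {"manipulate"}, "mobile_bot": {"navigate", "manipulate"}}

for step, (task, robot) in enumerate(plan(subtasks, robots), start=1):
    print(step, task, "->", robot)
```

A topological sort guarantees that no subtask is scheduled before its prerequisites, and the least-loaded rule is a simple stand-in for whatever cost model a real planner would use.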

Its robust, open-access design makes RoboBrain 2.0 immediately applicable to areas such as household robotics and industrial automation.

Potential for Embodied AI and Robotics

By unifying vision-language understanding, interactive reasoning, and robust planning, RoboBrain 2.0 sets a new standard for embodied AI. Its modular, scalable architecture and open-source training recipes facilitate innovation across robotics and AI. Whether you are an AI researcher or an engineer automating tasks, RoboBrain 2.0 provides a strong foundation for tackling the most difficult spatial and temporal problems.

For more detail, see the paper and code. All credit for this research goes to the researchers on this project.

Nikhil is an intern at Marktechpost. He has an integrated dual degree in Materials from the Indian Institute of Technology, Kharagpur. Nikhil is passionate about AI/ML and is continually researching its applications in fields such as biomaterials and biomedical science. With a background in Materials Science, he is always exploring new advancements.