The generative AI race has long been a game of ‘bigger is better.’ As power consumption and memory limits are reached, the conversation is shifting from raw parameter counts to architectural efficiency. The Liquid AI team is leading the charge with the release of LFM2-24B-A2B, a 24-billion-parameter model that redefines what we can expect from edge-capable AI.

The ‘A2B’ Architecture: A 1:3 Ratio for Efficiency

The ‘A2B’ in the model’s name stands for Attention-to-Base. In a standard Transformer, softmax attention is applied in every layer, which leads to massive KV (key-value) caches that devour VRAM.
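To make the VRAM cost concrete, here is a rough sketch of the standard KV-cache size formula for an all-attention stack. The layer count (40) and context length (32,768) come from this article; the KV-head count and head dimension are hypothetical placeholders, not published LFM2-24B-A2B values.

```python
def kv_cache_bytes(num_attn_layers: int, num_kv_heads: int, head_dim: int,
                   seq_len: int, batch: int = 1, bytes_per_elem: int = 2) -> int:
    """Size of the key/value cache for a stack of softmax-attention layers.

    Each attention layer stores one K and one V tensor of shape
    [batch, num_kv_heads, seq_len, head_dim]; bytes_per_elem=2 assumes bf16/fp16.
    """
    return 2 * num_attn_layers * batch * num_kv_heads * seq_len * head_dim * bytes_per_elem


# Hypothetical dimensions for illustration only (not published LFM2-24B-A2B values).
all_attention = kv_cache_bytes(num_attn_layers=40, num_kv_heads=8, head_dim=128, seq_len=32_768)
print(f"{all_attention / 2**30:.2f} GiB per sequence")  # 5.00 GiB per sequence
```

Because the cache grows linearly with both sequence length and batch size, serving many long requests at once multiplies this figure quickly.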

Liquid AI sidesteps the KV-cache problem with a hybrid structure. The ‘Base’ layers are efficient gated short convolution blocks, while the ‘Attention’ layers use Grouped Query Attention (GQA).
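The sketch below shows the general idea behind a gated short convolution on a single channel: an input gate, a causal convolution over a few neighboring tokens, then an output gate. It is a generic illustration that assumes elementwise gates and a 3-tap kernel; in a real layer the gates would come from learned projections of the input, and the exact gating, kernel size, and projections used in LFM2 are not described here. The function names are hypothetical.

```python
from typing import List, Sequence


def causal_short_conv(x: Sequence[float], kernel: Sequence[float]) -> List[float]:
    """Causal 1D convolution: y[t] = sum_k kernel[k] * x[t - k], zero-padded on the left."""
    out = []
    for t in range(len(x)):
        acc = 0.0
        for k, w in enumerate(kernel):
            if t - k >= 0:  # only current and past tokens, never future ones
                acc += w * x[t - k]
        out.append(acc)
    return out


def gated_short_conv(x: Sequence[float], in_gate: Sequence[float],
                     out_gate: Sequence[float], kernel: Sequence[float]) -> List[float]:
    """Hypothetical single-channel gated short convolution: gate, mix locally, gate again."""
    gated = [g * v for g, v in zip(in_gate, x)]      # input gate (elementwise)
    mixed = causal_short_conv(gated, kernel)         # short-range token mixing
    return [g * m for g, m in zip(out_gate, mixed)]  # output gate (elementwise)


# Toy example: all-ones gates and a 3-tap kernel of ones give running 3-token sums.
print(gated_short_conv([1.0, 2.0, 3.0, 4.0], [1.0] * 4, [1.0] * 4, [1.0, 1.0, 1.0]))
# [1.0, 3.0, 6.0, 9.0]
```

Unlike attention, a causal convolution with kernel size k only needs the previous k - 1 inputs at decode time, so its per-layer state stays fixed no matter how long the context grows.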

LFM2-24B-A2B uses a 1:3 ratio of attention layers to convolution layers:

- Total layers: 40

- Convolution blocks: 30

- Attention: 10

By interspersing GQA blocks among the gated convolutions, the model keeps the high-resolution retrieval of a Transformer and the reasoning power of an LS model.
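Only the attention layers keep a KV cache, so the 1:3 split bounds how much cache a hybrid stack carries. The sketch below builds a hypothetical 40-layer schedule; the article gives only the counts, so placing a GQA block at every fourth layer is an assumption, not the published layout.

```python
from typing import List


def hybrid_schedule(total_layers: int = 40, attn_every: int = 4) -> List[str]:
    """Hypothetical layout: every `attn_every`-th layer is GQA, the rest are gated convs."""
    return ["gqa" if (i + 1) % attn_every == 0 else "conv" for i in range(total_layers)]


schedule = hybrid_schedule()
n_attn = schedule.count("gqa")
n_conv = schedule.count("conv")
print(n_conv, n_attn)                                     # 30 10
print(f"KV-bearing share: {n_attn / len(schedule):.0%}")  # KV-bearing share: 25%
```

With identical per-layer attention dimensions, 10 KV-bearing layers out of 40 means roughly a quarter of the KV cache that an all-attention stack of the same depth would need.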

Sparse MoE: 24B Intelligence on a 2B Budget

The most significant feature of LFM2-24B-A2B is its sparse Mixture of Experts (MoE) design. The model has 24 billion total parameters but activates only 2.3 billion per token.
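A sparse MoE layer achieves this by routing each token to a few experts instead of all of them. The following is a minimal top-k routing sketch in plain Python; the expert count, the value of k, and the softmax renormalization over the selected experts are illustrative assumptions, since the article does not describe LFM2's router.

```python
import math
from typing import Callable, List, Sequence, Tuple


def route_token(router_logits: Sequence[float], top_k: int) -> List[Tuple[int, float]]:
    """Pick the top_k experts for one token and return (expert_index, weight) pairs.

    Weights are a softmax over the selected experts' logits only (an assumed
    renormalization scheme; routers differ between MoE models).
    """
    ranked = sorted(range(len(router_logits)), key=lambda i: router_logits[i], reverse=True)
    chosen = ranked[:top_k]
    peak = max(router_logits[i] for i in chosen)  # subtract max for numerical stability
    exps = [math.exp(router_logits[i] - peak) for i in chosen]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(chosen, exps)]


def moe_forward(x: float, experts: Sequence[Callable[[float], float]],
                router_logits: Sequence[float], top_k: int) -> float:
    # Only the chosen experts are evaluated; the others cost no compute for this token.
    return sum(w * experts[i](x) for i, w in route_token(router_logits, top_k))


# Hypothetical toy setup: 4 scalar "experts", 2 active per token.
experts = [lambda x, k=k: (k + 1) * x for k in range(4)]
print(route_token([0.1, 2.0, -1.0, 1.5], top_k=2))
print(moe_forward(2.0, experts, [0.1, 2.0, -1.0, 1.5], top_k=2))

# Active share of parameters quoted in the article: 2.3B of 24B.
print(f"active fraction: {2.3e9 / 24e9:.1%}")  # active fraction: 9.6%
```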

This changes the deployment picture. The lean active-parameter path keeps per-token compute low, and the model is designed to fit in 32 GB of RAM, so it can run locally on high-end consumer laptops and desktops with integrated GPUs and NPUs rather than requiring a data-center A100. It delivers the knowledge density of a 24B model with faster inference and lower energy consumption than a dense model of that size.
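A quick back-of-the-envelope check on the 32 GB figure: in an MoE model all 24 billion weights must still be resident in memory even though only 2.3 billion are used per token, so the sparse path saves compute rather than weight storage. The sketch below estimates weight memory at a few precisions; the article does not state which quantization the 32 GB target assumes, and the KV cache and activations need additional space on top of this.

```python
def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Memory for the weights alone, in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


TOTAL_PARAMS = 24e9  # all experts must be loaded, not just the 2.3B active per token
for bits in (16, 8, 4):
    gb = weight_memory_gb(TOTAL_PARAMS, bits)
    print(f"{bits:>2}-bit weights: {gb:.0f} GB, under 32 GB: {gb < 32}")
# 16-bit weights: 48 GB, under 32 GB: False
#  8-bit weights: 24 GB, under 32 GB: True
#  4-bit weights: 12 GB, under 32 GB: True
```

At 16-bit precision the weights alone would exceed 32 GB, which suggests that the edge deployment relies on 8-bit or lower quantization.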

Benchmarks: Punching Up

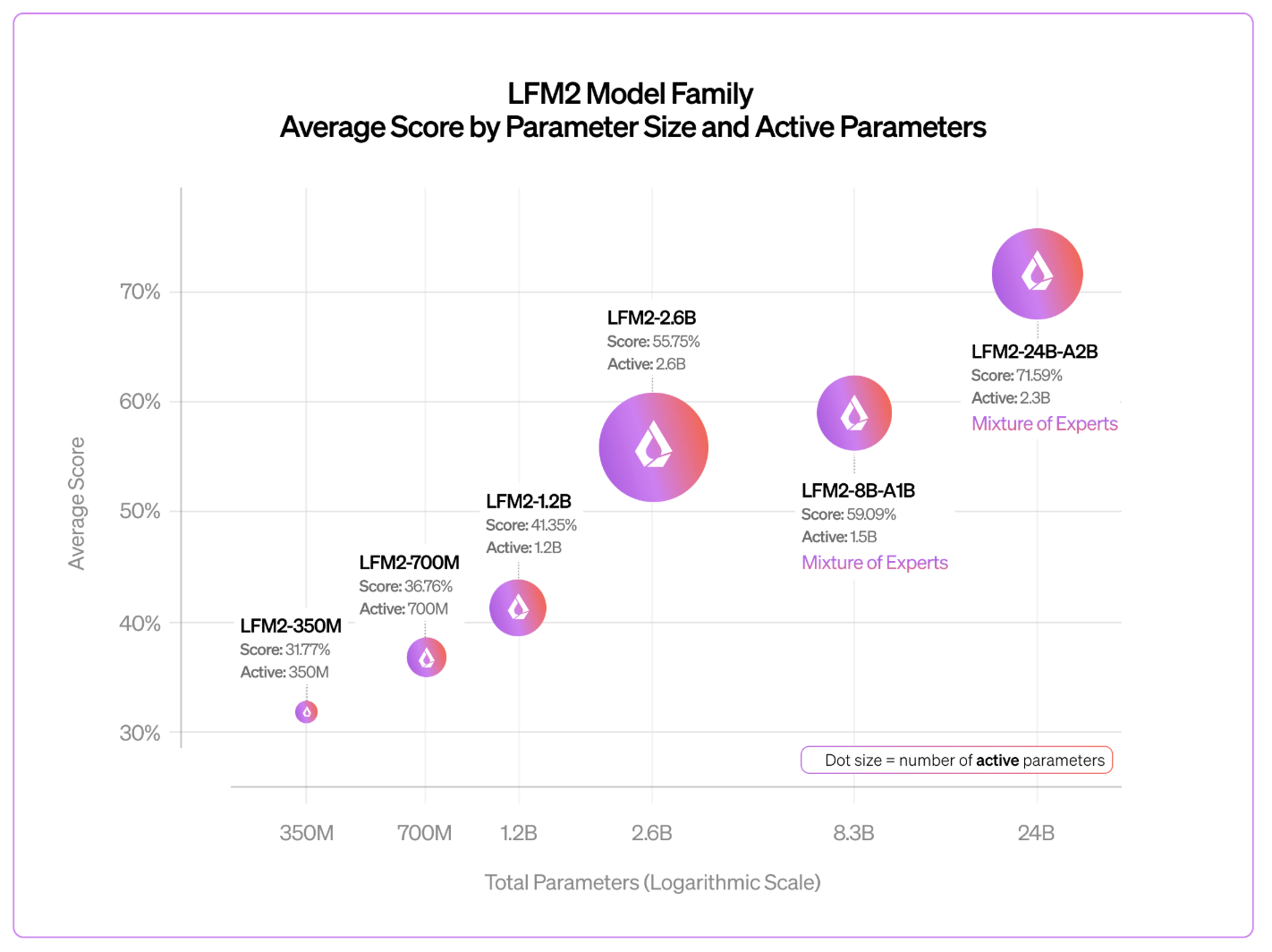

The Liquid AI team reports that the LFM2 family follows predictable, log-linear scaling behavior, and that the 24B-A2B model outperforms larger competitors despite having fewer active parameters.

- Logic and Reasoning: On benchmarks such as GSM8K and MATH-500, it rivals dense models twice its size.

- Throughput: Benchmarked on a single NVIDIA H100 with vLLM, it reached 26.8K total tokens per second at 1,024 concurrent requests, far outpacing gpt-oss-20b and Qwen3-30B-A3B.

- Long Context: The model has a 32K-token context window, optimized for retrieval-augmented generation (RAG) pipelines and local document analysis.
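As a rough sanity check on the throughput figure, dividing the aggregate rate by the number of concurrent requests gives an average per-request rate. This assumes the load is spread evenly across requests and that ‘total tokens’ counts both prompt and generated tokens, neither of which the article specifies.

```python
def per_request_tokens_per_s(total_tokens_per_s: float, concurrent_requests: int) -> float:
    # Average share of the aggregate throughput per request (assumes an even split).
    return total_tokens_per_s / concurrent_requests


rate = per_request_tokens_per_s(26_800, 1_024)
print(f"~{rate:.1f} tokens/s per request")  # ~26.2 tokens/s per request
```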

Tech Cheat Sheet

| Property | Specification |
|---|---|
| Total Parameters | 24 billion |
| Active Parameters | 2.3 billion |
| Architecture | Hybrid (gated short convolutions + GQA) |
| Layers | 40 (30 Base / 10 Attention) |
| Context Length | 32,768 tokens |
| Training Tokens | 17 trillion |
| License | LFM Open License v1.0 |
| Native Support | llama.cpp, vLLM, SGLang, MLX |

Key Takeaways

- Hybrid ‘A2B’ Architecture: The model uses a 1:3 ratio of Grouped Query Attention (GQA) blocks to gated short convolutions. By using linear-complexity ‘Base’ layers for 30 of its 40 layers, it achieves much faster prefill and decode speeds with a significantly smaller memory footprint than traditional all-attention Transformers.

- Sparse MoE Efficiency: Despite having 24 billion total parameters, the model activates only 2.3 billion per token. This sparse Mixture of Experts design lets it deliver the reasoning depth of a large model while keeping the inference latency and energy efficiency of a 2B-parameter model.

- True Edge Capability: Optimized via hardware-in-the-loop search, the model is designed to fit in 32 GB of RAM, so it can be deployed on high-end consumer hardware, including laptops with integrated GPUs and NPUs.

- State-of-the-Art Performance: LFM2-24B-A2B outperforms larger competitors such as Qwen3-30B-A3B and gpt-oss-20b in throughput, reaching approximately 26.8K tokens per second on a single H100. Benchmarks also show near-linear scaling and strong performance on long-context tasks up to the limit of its 32K-token window.
