In deep learning, classification models don't just need to make predictions; they need to express confidence. The Softmax activation function provides this. Softmax transforms the unbounded raw scores (logits) produced by a neural network into a probability distribution, in which each value can be interpreted as the likelihood of a particular class.

Softmax is a key component of multi-class classification problems, which range from language modeling to image recognition. This article will help you gain a better understanding of Softmax and how it works.

Naive Softmax Implementation

```python
import torch

def softmax_naive(logits):
    # Exponentiate every logit, then divide by the sum over classes (dim=1)
    exp_logits = torch.exp(logits)
    return exp_logits / exp_logits.sum(dim=1, keepdim=True)
```

This is Softmax in its simplest form: the function exponentiates each logit and normalizes the results by the sum of the exponentials across all classes.
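Take a single sample with logits $z = (z_1, \dots, z_K)$ over $K$ classes. This notation is introduced here; the code works on a whole batch, one row per sample. The formula that `softmax_naive` applies to each row is:

$$
\operatorname{softmax}(z)_i = \frac{e^{z_i}}{\sum_{j=1}^{K} e^{z_j}}, \qquad i = 1, \dots, K
$$

Every output is positive and the outputs sum to 1, which is what allows them to be read as class probabilities.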

This implementation is mathematically correct and easy to read, but it is numerically unstable. Large positive logits can overflow to infinity, and large negative logits can underflow to zero. This version should therefore not be used in real training pipelines.
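Both failure modes can be reproduced in isolation. The sketch below applies the same naive formula, redefined so the snippet runs on its own, to two hand-picked rows. These are illustrative values, not from the original. Mathematically, their Softmax outputs are approximately [1, 0] and exactly [0.5, 0.5].

```python
import torch

def softmax_naive(logits):
    # Same naive formula as above: exponentiate, then normalize per row
    exp_logits = torch.exp(logits)
    return exp_logits / exp_logits.sum(dim=1, keepdim=True)

# Overflow: exp(1000) is inf in float32, and inf / inf is NaN
overflow = softmax_naive(torch.tensor([[1000.0, 0.0]]))
print(overflow)   # first entry is nan, second is 0

# Underflow: exp(-1000) rounds to 0, so the row becomes 0 / 0 = NaN
underflow = softmax_naive(torch.tensor([[-1000.0, -1000.0]]))
print(underflow)  # both entries are nan
```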

Sample Logits and Target Labels

This example illustrates both normal and failure cases using three samples. The first and third samples have reasonable logit values and behave as expected during the Softmax calculation. The second sample intentionally includes extreme values (1000 and -1000) to demonstrate numerical instability; this is where the naive Softmax implementation breaks down.

The `targets` tensor specifies the correct class index for each sample. It is used to compute the classification loss and to observe how the instability propagates during backpropagation.
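Selecting one probability per sample by its target index is a small but easy-to-misread step. A minimal sketch with made-up probabilities shows how it works. Pairing `torch.arange` with the targets picks entry `targets[i]` from row `i`, and `torch.gather` is an equivalent way to write it:

```python
import torch

# Hypothetical probabilities for a batch of 2 samples over 3 classes
probs = torch.tensor([[0.7, 0.2, 0.1],
                      [0.1, 0.1, 0.8]])
targets = torch.tensor([0, 2])  # correct class index per sample

# Advanced indexing: row i, column targets[i]
picked = probs[torch.arange(len(targets)), targets]

# Equivalent formulation with gather along the class dimension
gathered = probs.gather(1, targets.unsqueeze(1)).squeeze(1)

print(picked)                         # probability of each sample's true class
print(torch.equal(picked, gathered))  # True
```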

```python
# A batch of 3 samples, each with logits for 3 classes
logits = torch.tensor([
    [2.0, 1.0, 0.1],
    [1000.0, 1.0, -1000.0],  # extreme values that break the naive Softmax
    [3.0, 2.0, 1.0]
], requires_grad=True)

# Correct class index for each sample
targets = torch.tensor([0, 2, 1])
```

Softmax: Output and Failure Case

The naive Softmax is used to calculate class probabilities during the forward pass. For the normal logits (the first and third samples), the output is a valid probability distribution: each value lies between 0 and 1, and the values add up to 1.

The second sample clearly reveals the problem: exponentiating 1000 overflows to infinity, while exponentiating -1000 underflows to zero. Normalization then performs invalid operations (infinity divided by infinity), producing NaN values and zero probabilities. Once NaN appears at this stage, the model becomes unusable for training.

```python
# Forward pass
probs = softmax_naive(logits)
print("Softmax probabilities:")
print(probs)
```

Target Probabilities and Loss Breakdown

Here, the predicted probability of each sample's target class is extracted. The first and third samples have valid target probabilities. The second sample, however, has a target probability of 0.0 because of numerical underflow during the Softmax calculation. The loss is computed as -log(p), and log(0.0) is -∞, so that sample's loss is +∞.

As a result, the overall loss becomes infinite, which is a major training failure. Once the loss is infinite, gradient computation breaks down, and the NaNs that appear during backpropagation stop the learning process.
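The path from a zero probability to an infinite loss and an infinite gradient can be checked on a tiny tensor. The values below are illustrative assumptions; the middle entry plays the role of the underflowed target probability.

```python
import torch

# Target probabilities where the middle one underflowed to exactly 0.0
p = torch.tensor([0.6, 0.0, 0.25], requires_grad=True)

per_sample = -torch.log(p)  # -log(0) = +inf
loss = per_sample.mean()    # a single inf makes the mean inf
loss.backward()

print(per_sample)
print(loss)
print(p.grad)  # d/dp of -log(p)/3 is -1/(3p): the middle entry is -inf
```

Because the derivative of $-\log p$ is $-1/p$, any target probability that underflows to zero yields an infinite gradient. That gradient can turn into NaN when it is later multiplied by zero or combined with another infinity.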

```python
# Extract the probability assigned to each sample's target class
target_probs = probs[torch.arange(len(targets)), targets]
print("\nTarget probabilities:")
print(target_probs)

# Compute loss (negative log-likelihood, averaged over the batch)
loss = -torch.log(target_probs).mean()
print("\nLoss:", loss)
```

Backpropagation: Gradient Corruption

The impact of the infinite loss is immediately apparent during backpropagation. Because their Softmax outputs behaved well, the gradients of the first and third samples remain finite. For the second sample, the log(0) term in the loss makes the gradients NaN across all classes.

These NaNs propagate backward through the network and disrupt training. This is why numerical instability at the boundary between Softmax and the loss is so dangerous: once NaNs appear, recovery is nearly impossible without restarting training.
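A small sketch shows how NaNs spread. It assumes a plain SGD-style update written by hand, and the learning rate 0.1 and all tensors are arbitrary illustrative values. One NaN gradient entry poisons a weight, and then every computation that uses that weight. The final `torch.isfinite` check is one common guard, not something the original code includes.

```python
import torch

# A weight vector and a gradient in which one entry is already NaN
w = torch.tensor([1.0, 2.0])
grad = torch.tensor([float("nan"), 0.5])

# One hand-written SGD-style update (learning rate 0.1 is arbitrary)
w = w - 0.1 * grad
print(w)  # first entry is now nan, second is 1.95

# Any later computation that touches the NaN weight is NaN as well
x = torch.tensor([3.0, 4.0])
print(x @ w)  # nan

# One common guard: check the loss before calling backward() or stepping
loss = torch.tensor(float("inf"))
if not torch.isfinite(loss):
    print("Non-finite loss detected; skipping this update")
```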

```python
loss.backward()
print("\nGradients:")
print(logits.grad)
```

Numerical Instability: Causes and Consequences

Computing Softmax separately from cross-entropy exposes the intermediate exponentials to overflow and underflow, which creates a significant risk of numerical instability. Large logits can push the exponentials to infinity and small probabilities to exactly zero. This leads to log(0) and, in turn, NaN gradients. At production scale, this is not a rare edge case but a near-certainty: without stable, fused implementations, large multi-GPU training runs would fail unpredictably.
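The cost of separating the two steps is visible even with PyTorch's own `torch.softmax`, which already subtracts the maximum internally. The tiny probabilities still round to zero before the log is taken. `torch.log_softmax` performs both steps as one operation. The row below reuses the extreme sample from earlier:

```python
import torch

row = torch.tensor([[1000.0, 1.0, -1000.0]])

# Separate steps: softmax, then log. Tiny probabilities underflow to 0,
# so their log is -inf even though the true log-probability is finite.
separate = torch.log(torch.softmax(row, dim=1))

# Fused step: log_softmax computes z - logsumexp(z) without forming
# the tiny probabilities, so every entry stays finite.
fused = torch.log_softmax(row, dim=1)

print(separate)  # values: 0, -inf, -inf
print(fused)     # values: 0, -999, -2000
```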

Computers cannot store arbitrarily large or small numbers. Floating-point formats such as FP32 place strict limits on the magnitude of a stored value. When Softmax computes exp(x), a large positive x produces a result that exceeds the maximum representable value and becomes infinity. A large negative x produces a result so small that it rounds to zero. Once a value becomes zero or infinity, subsequent division and logarithm operations produce incorrect results such as NaN or infinity.
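The FP32 limits can be queried directly. A short sketch uses `torch.finfo` to show where `exp` stops being representable. The inputs 88, 89 and -120 are illustrative values chosen on either side of the limits.

```python
import math
import torch

fp32_max = torch.finfo(torch.float32).max
print(fp32_max)            # about 3.4e38
print(math.log(fp32_max))  # about 88.72: the largest "safe" input to exp

x = torch.tensor([88.0, 89.0, -120.0])
y = torch.exp(x)
print(y)  # exp(88) is finite, exp(89) overflows to inf, exp(-120) underflows to 0
```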

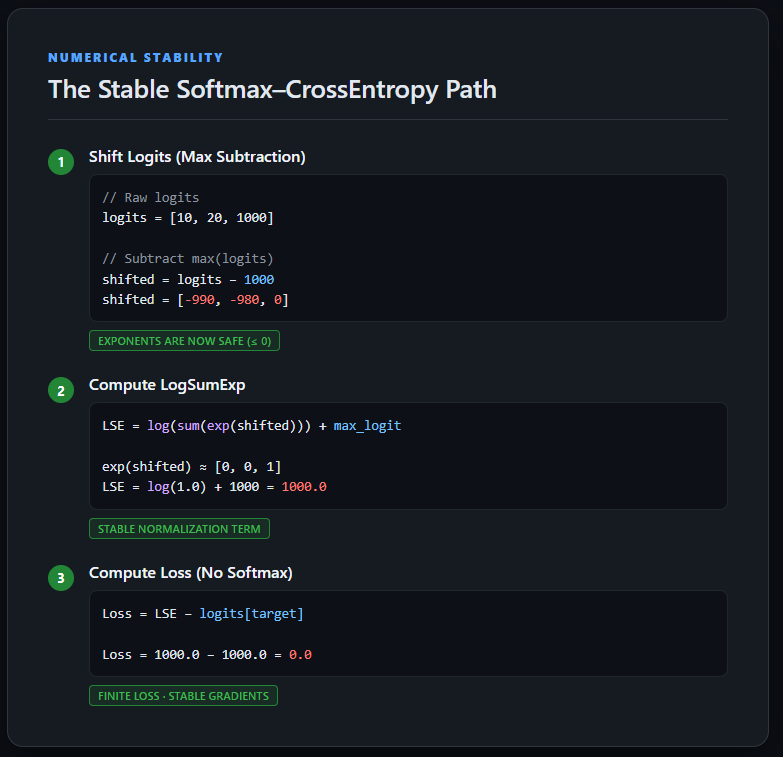

Implementing Stable Cross-Entropy Loss Using LogSumExp

This implementation computes cross-entropy directly from the raw logits, without explicitly computing Softmax probabilities. To ensure numerical stability, the per-sample maximum logit is first subtracted from every logit, which keeps the exponentials within safe limits.
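The max shift rests on a standard identity. The notation here is introduced for this derivation, not taken from the original. Consider one sample with logits $z_1, \dots, z_K$, target class $y$, and per-sample maximum $m = \max_j z_j$ (`max_logits` in the implementation that follows). Factoring $e^{m}$ out of the sum gives

$$
\log \sum_{j=1}^{K} e^{z_j} = m + \log \sum_{j=1}^{K} e^{z_j - m}
$$

and the per-sample cross-entropy loss can be written without ever forming a probability:

$$
\mathcal{L}(z, y) = -\log \frac{e^{z_y}}{\sum_{j=1}^{K} e^{z_j}} = \log \sum_{j=1}^{K} e^{z_j} - z_y
$$

After the shift, the largest exponent is $e^{0} = 1$, so nothing overflows. Take the extreme sample $z = (1000, 1, -1000)$, whose target is the last class, so $z_y = -1000$. This gives $m = 1000$, a LogSumExp of $1000 + \log(1 + e^{-999} + e^{-2000}) \approx 1000$, and a loss of $1000 - (-1000) = 2000$.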

The shifted logits are then used to compute the LogSumExp normalization term, with the maximum added back. The original, unshifted target logit is subtracted from this term to obtain the loss. This approach avoids overflow and NaN gradients, and it mirrors how cross-entropy is implemented in deep-learning frameworks.
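The LogSumExp term on its own can be compared three ways:

- computed naively;
- with the manual max shift described above;
- with PyTorch's built-in `torch.logsumexp`, which its documentation describes as numerically stabilized.

The two rows are the extreme sample and the first normal sample from earlier:

```python
import torch

z = torch.tensor([[1000.0, 1.0, -1000.0],
                  [2.0, 1.0, 0.1]])

# Naive: exp(1000) overflows, so the first row becomes inf
naive = torch.log(torch.exp(z).sum(dim=1))

# Manual max shift: subtract the row maximum, then add it back outside the log
m = z.max(dim=1, keepdim=True).values
shifted = m.squeeze(1) + torch.log(torch.exp(z - m).sum(dim=1))

# PyTorch's built-in LogSumExp
builtin = torch.logsumexp(z, dim=1)

print(naive)    # first entry inf
print(shifted)  # first entry 1000, second about 2.417
print(builtin)  # matches `shifted`
```

For the well-behaved row, all three agree; only the naive version fails on the extreme row.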

```python
def stable_cross_entropy(logits, targets):
    # Find the maximum logit per sample
    max_logits, _ = torch.max(logits, dim=1, keepdim=True)

    # Shift logits for numerical stability (the largest value becomes 0)
    shifted_logits = logits - max_logits

    # Calculate LogSumExp, adding the maximum back
    log_sum_exp = torch.log(torch.sum(torch.exp(shifted_logits), dim=1)) + max_logits.squeeze(1)

    # Compute loss using the ORIGINAL (unshifted) logits
    loss = log_sum_exp - logits[torch.arange(len(targets)), targets]

    return loss.mean()
```

Stable Forward and Backward Pass

The stable cross-entropy is applied to the same extreme logits and produces a finite loss with well-defined gradients. The second sample contains very large values (1000 and -1000), yet the LogSumExp formulation keeps intermediate calculations within a safe numerical range. Backpropagation completes without NaNs, and the gradient signal is meaningful for every class.

This confirms that the earlier instability was caused not by the data itself but by the naive separation of Softmax and cross-entropy. A numerically stable, fused loss formulation fully resolves the issue.

```python
logits = torch.tensor([
    [2.0, 1.0, 0.1],
    [1000.0, 1.0, -1000.0],
    [3.0, 2.0, 1.0]
], requires_grad=True)

targets = torch.tensor([0, 2, 1])

loss = stable_cross_entropy(logits, targets)
print("Stable loss:", loss)

loss.backward()
print("\nGradients:")
print(logits.grad)
```
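As a cross-check, a sketch can use `torch.nn.functional.cross_entropy` as a reference. It combines `log_softmax` and negative log-likelihood, averaged over the batch by default, and it should give a finite loss on the same batch. Its gradient with respect to the logits also has a well-known closed form, $(\operatorname{softmax}(z) - \operatorname{onehot}(y)) / N$ for a batch of $N$ samples. This form is bounded, which explains why the gradients stay finite:

```python
import torch
import torch.nn.functional as F

logits = torch.tensor([[2.0, 1.0, 0.1],
                       [1000.0, 1.0, -1000.0],
                       [3.0, 2.0, 1.0]], requires_grad=True)
targets = torch.tensor([0, 2, 1])

# Built-in fused loss: log_softmax + negative log-likelihood, mean over batch
loss = F.cross_entropy(logits, targets)
loss.backward()

# Closed-form gradient of the mean cross-entropy with respect to the logits
with torch.no_grad():
    expected = (torch.softmax(logits, dim=1)
                - F.one_hot(targets, num_classes=3)) / len(targets)

print(loss.item())                           # about 667.27
print(torch.allclose(logits.grad, expected)) # True
```

For the extreme sample, the per-sample loss is exactly $1000 - (-1000) = 2000$, which dominates the batch mean. Its gradient row is roughly $[1/3, 0, -1/3]$: it pushes the wrongly favored class down and the target class up. Comparing this loss with the output of `stable_cross_entropy` is a quick way to validate the hand-written version.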

Conclusion

Many training problems are caused by the gap between mathematical formulas and code. Softmax and cross-entropy are well defined mathematically, but naive implementations ignore the finite precision of IEEE 754 floating-point hardware, resulting in underflow and overflow.

The fix is simple but crucial: shift logits by their maximum before exponentiating, and work in log space whenever possible. Most importantly, training rarely requires explicit probabilities; stable log-probabilities are sufficient and far safer. When a production loss suddenly becomes NaN, it is a common sign that Softmax is being computed manually.
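The same advice applies when explicit probabilities are genuinely needed, for example at inference time. Below is a minimal sketch of a max-shifted Softmax. It assumes 2-D input of shape (batch, classes), as in the earlier examples, and `softmax_stable` is a hypothetical name, not a library function.

```python
import torch

def softmax_stable(logits):
    # Shift each row so its maximum is 0; softmax is unchanged by this shift
    shifted = logits - logits.max(dim=1, keepdim=True).values
    exp_shifted = torch.exp(shifted)  # every value now lies in [0, 1]
    return exp_shifted / exp_shifted.sum(dim=1, keepdim=True)

logits = torch.tensor([[2.0, 1.0, 0.1],
                       [1000.0, 1.0, -1000.0],
                       [3.0, 2.0, 1.0]])

probs = softmax_stable(logits)
print(probs)  # no NaN; the extreme row is [1, 0, 0]
print(torch.allclose(probs, torch.softmax(logits, dim=1)))  # True
```

The row sum always includes at least one term equal to exp(0) = 1, so the denominator can never be zero or infinite.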
