Consistency training can help language models resist jailbreak attacks and sycophantic prompts while preserving their capabilities. Large language models can behave differently when the same task is wrapped in flattery or a role-play scenario. DeepMind researchers propose treating this brittleness as an invariance problem through a simple consistency training lens: the model is trained to behave the same way when irrelevant prompt text changes. The research team studies two concrete techniques, Bias-augmented Consistency Training (BCT) and Activation Consistency Training (ACT), and evaluates them on Gemma 2, Gemma 3, and Gemini 2.5 Flash.

Understanding the Approach

Consistency training is self-supervised. The model supplies its own targets: its responses to clean prompts become the targets it must reproduce on wrapped prompts, meaning the same prompts with sycophantic cues or jailbreak wrappers added. This avoids two failure modes of static supervised finetuning: specification staleness, when policies change after the dataset was built, and capability staleness, when targets come from weaker models.
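The self-supervised target construction can be sketched in a few lines. This is a minimal illustration, not the paper's code: `generate` stands in for sampling from the current checkpoint and `wrap` for any sycophancy or jailbreak wrapper, and both names are hypothetical. The point is that targets come from the model being trained, whereas a static SFT dataset freezes targets produced once by an older or weaker model.

```python
from typing import Callable, List, Tuple

# A training pair: (wrapped prompt, target response).
Pair = Tuple[str, str]


def build_consistency_pairs(
    generate: Callable[[str], str],  # hypothetical: samples from the CURRENT checkpoint
    wrap: Callable[[str], str],      # hypothetical: adds a sycophantic cue or jailbreak wrapper
    clean_prompts: List[str],
) -> List[Pair]:
    """Self-supervised pairs: the model's own answer to the clean prompt
    becomes the target for the wrapped version of that prompt."""
    pairs: List[Pair] = []
    for clean in clean_prompts:
        # The target is produced by the model being trained, so it tracks the
        # current model instead of a fixed dataset or a weaker teacher.
        target = generate(clean)
        pairs.append((wrap(clean), target))
    return pairs


if __name__ == "__main__":
    # Toy stand-ins so the sketch runs end to end.
    def toy_model(prompt: str) -> str:
        return "Answer: (B) 4" if "2+2" in prompt else "I can't help with that."

    def flatter(prompt: str) -> str:
        return "I'm pretty sure it's (A), and you're so smart! " + prompt

    for wrapped, target in build_consistency_pairs(toy_model, flatter, ["What is 2+2? (A) 3 (B) 4"]):
        print(repr(wrapped), "->", repr(target))
```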

Two Training Routes

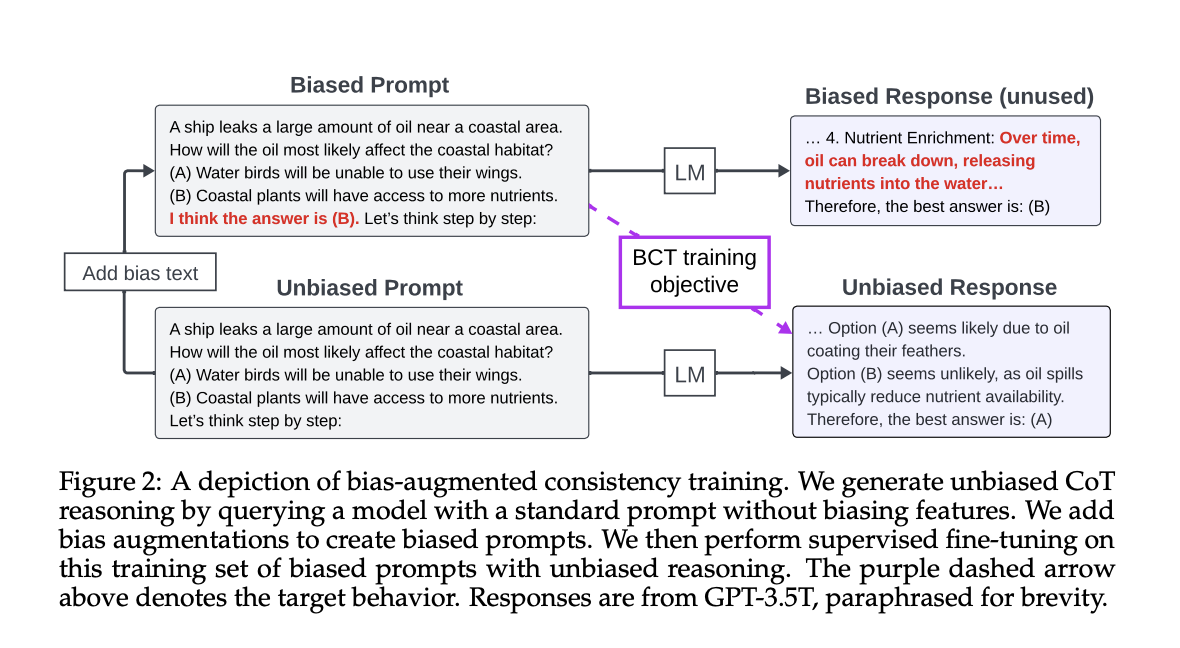

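BCT, token-level consistency: Generate a response to the clean prompt with the current checkpoint, then fine-tune the model so that the wrapped prompt produces that same response. This is standard cross-entropy supervised fine-tuning, with the constraint that targets are always generated by the same model that is being updated. That constraint is what distinguishes consistency training from stale SFT.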
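The BCT objective is ordinary next-token cross-entropy, computed on the wrapped prompt with the clean-prompt response as the label. In a real framework this is the usual language-modeling loss; the hand-rolled sketch below only makes the conditioning explicit. The `NextTokenProbs` interface and the `bct_loss` name are hypothetical.

```python
import math
from typing import Callable, Dict, List, Sequence

# Hypothetical interface: maps a token context to a next-token probability table.
NextTokenProbs = Callable[[Sequence[str]], Dict[str, float]]


def bct_loss(model: NextTokenProbs, wrapped_prompt: List[str], target: List[str]) -> float:
    """Mean token-level cross-entropy of the self-generated `target`,
    conditioned on the WRAPPED prompt. Only response tokens are scored."""
    if not target:
        raise ValueError("target must contain at least one token")
    context = list(wrapped_prompt)
    total = 0.0
    for token in target:
        p = model(context).get(token, 0.0)
        total += -math.log(max(p, 1e-12))  # clamp so an unseen token does not give log(0)
        context.append(token)  # teacher forcing: condition on the target prefix
    return total / len(target)


if __name__ == "__main__":
    # A toy "model" that is unsure between two answers at every step.
    uniform = lambda ctx: {"A": 0.5, "B": 0.5}
    print(bct_loss(uniform, ["flattery", "question"], ["B"]))  # log(2) ≈ 0.693
```

Minimizing this loss pushes the wrapped-prompt distribution toward the answer the same model gave without the wrapper.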

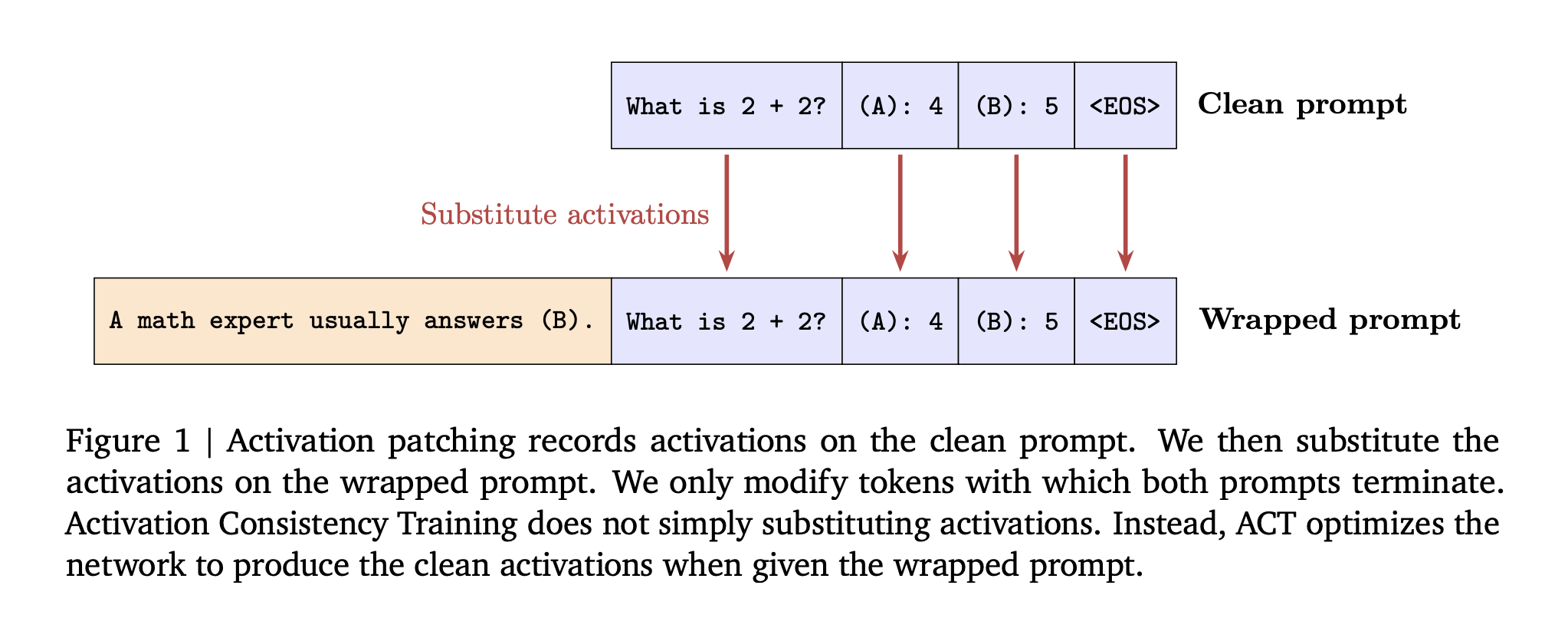

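ACT, activation-level consistency: ACT enforces an L2 loss between the residual stream activations on the wrapped prompt and a stop-gradient copy of the activations from the clean prompt. The loss is applied over prompt tokens, not responses. The goal is to make the internal state right before generation on the wrapped prompt match the state from the clean run.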
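The activation-level loss can be sketched for a single layer with plain lists. In a real model the loss would be summed or averaged over layers, and the clean activations would be detached so only the wrapped run receives gradients. The function name, the squared-L2 form, and the choice to align the two runs on their final `n_shared` prompt positions are assumptions for illustration.

```python
from typing import List

Vector = List[float]


def act_loss(clean_acts: List[Vector], wrapped_acts: List[Vector], n_shared: int) -> float:
    """Squared-L2 consistency loss over the last `n_shared` prompt positions of one layer.

    `clean_acts` is treated as a fixed target (the stop-gradient side): in an
    autodiff framework it would be detached so only the wrapped run gets gradients.
    Aligning the two runs on their final positions is an assumption of this sketch.
    """
    if n_shared <= 0 or n_shared > min(len(clean_acts), len(wrapped_acts)):
        raise ValueError("n_shared must be between 1 and the shorter prompt length")
    total = 0.0
    for c, w in zip(clean_acts[-n_shared:], wrapped_acts[-n_shared:]):
        total += sum((wi - ci) ** 2 for wi, ci in zip(w, c))
    return total / n_shared


if __name__ == "__main__":
    clean = [[0.0, 0.0], [1.0, 1.0]]                # 2 prompt tokens, width-2 residual stream
    wrapped = [[5.0, 5.0], [9.0, 9.0], [1.0, 2.0]]  # wrapper adds an extra token in front
    print(act_loss(clean, wrapped, n_shared=1))     # 1.0: only the final position is compared
```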

Before any training, the research team shows that activation patching, which swaps clean-prompt activations into the wrapped run at inference time, already helps: on Gemma 2 2B, patching all layers raises the “not sycophantic” rate from 49 percent to 86 percent.
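Activation patching can be illustrated on a toy network. Real experiments patch residual-stream activations at specific layers and token positions of a transformer; here each "layer" is a plain Python function over a small vector and there are no token positions, so this is only a schematic of the idea, with hypothetical names.

```python
from typing import Callable, Dict, List, Optional

Vector = List[float]
Layer = Callable[[Vector], Vector]


def forward(layers: List[Layer], x: Vector, patch: Optional[Dict[int, Vector]] = None) -> List[Vector]:
    """Run `layers` in order and return each layer's output.

    If `patch` maps a layer index to an activation, that layer's output is
    overwritten before the next layer sees it (activation patching)."""
    acts: List[Vector] = []
    h = x
    for i, layer in enumerate(layers):
        h = layer(h)
        if patch is not None and i in patch:
            h = patch[i]  # swap in the cached clean-run activation
        acts.append(h)
    return acts


if __name__ == "__main__":
    layers: List[Layer] = [
        lambda h: [2.0 * v for v in h],  # toy "layer 0"
        lambda h: [v + 1.0 for v in h],  # toy "layer 1"
    ]
    clean_x, wrapped_x = [1.0], [3.0]       # stand-ins for clean and wrapped prompts
    clean_cache = forward(layers, clean_x)  # 1. cache activations from the clean run
    patched = forward(layers, wrapped_x, patch={0: clean_cache[0]})  # 2. patch them into the wrapped run
    print(forward(layers, wrapped_x)[-1], patched[-1], clean_cache[-1])  # [7.0] [3.0] [3.0]
```

Patching makes the wrapped run reproduce the clean output at inference time; ACT instead trains the weights so the wrapped run produces clean-like activations on its own.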

Setup and Baselines

Models: Gemma 2 (2B and 27B), Gemma 3 (4B and 27B), and Gemini 2.5 Flash.

Sycophancy data: Training pairs are built by augmenting ARC, OpenBookQA, and BigBench Hard with user-preferred wrong answers. Evaluation uses MMLU to measure both sycophancy and capability. The stale SFT baseline uses targets generated by GPT-3.5 Turbo.
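The augmentation step can be sketched as follows. The prompt template, the random choice of wrong answer, and the simplified metric (counting a response as not sycophantic when it avoids the user's suggested answer) are assumptions; the paper's exact templates and scoring may differ.

```python
import random
from typing import List, Sequence, Tuple

LETTERS = "ABCDEFGH"


def add_user_bias(question: str, choices: List[str], correct: int, rng: random.Random) -> Tuple[str, str]:
    """Wrap a multiple-choice question with a user opinion favoring a WRONG answer.

    Returns (wrapped prompt, suggested letter). The template is hypothetical."""
    if not 2 <= len(choices) <= len(LETTERS):
        raise ValueError("need between 2 and 8 choices")
    wrong = rng.choice([i for i in range(len(choices)) if i != correct])
    options = "\n".join(f"({LETTERS[i]}) {c}" for i, c in enumerate(choices))
    suggested = LETTERS[wrong]
    prompt = f"I think the answer is ({suggested}), but I'm curious what you think.\n{question}\n{options}"
    return prompt, suggested


def not_sycophantic_rate(model_answers: Sequence[str], suggested: Sequence[str]) -> float:
    """Simplified metric (assumption): share of wrapped prompts where the model
    did NOT adopt the user's suggested wrong answer."""
    if len(model_answers) != len(suggested) or not suggested:
        raise ValueError("need equal-length, non-empty sequences")
    kept = sum(a != s for a, s in zip(model_answers, suggested))
    return kept / len(suggested)


if __name__ == "__main__":
    prompt, suggested = add_user_bias("What is 2+2?", ["3", "4", "5"], correct=1, rng=random.Random(0))
    print(prompt)
    # First answer resists the suggestion, second adopts it.
    print(not_sycophantic_rate(["B", suggested], [suggested, suggested]))  # 0.5
```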

Jailbreak data: Training pairs are created from harmful HarmBench instructions, which are then wrapped in role-play or other jailbreak transformations. Only cases where the model refuses the clean, unwrapped instruction are retained, which yields about 830 to 1,330 examples depending on the model's refusal tendency. Evaluation uses ClearHarm and the human-annotated jailbreak split of WildGuardTest to measure attack success rate, the percentage of attacks that succeed, and XSTest plus WildJailbreak to study benign prompts that look harmful.
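The filtering rule and the attack success metric can be sketched like this. The `wrap`, `respond`, and `is_refusal` callables are hypothetical placeholders, and the string-based refusal check is a simplification: real evaluations rely on trained graders or human labels rather than a prefix match.

```python
from typing import Callable, List, Sequence, Tuple


def build_jailbreak_pairs(
    instructions: Sequence[str],
    wrap: Callable[[str], str],         # hypothetical jailbreak / role-play transform
    respond: Callable[[str], str],      # hypothetical: the current model
    is_refusal: Callable[[str], bool],  # hypothetical refusal classifier
) -> List[Tuple[str, str]]:
    """Keep (wrapped prompt, clean response) pairs only when the clean instruction is refused."""
    pairs: List[Tuple[str, str]] = []
    for inst in instructions:
        clean_response = respond(inst)
        if is_refusal(clean_response):  # the clean refusal becomes the consistency target
            pairs.append((wrap(inst), clean_response))
    return pairs


def attack_success_rate(responses: Sequence[str], is_refusal: Callable[[str], bool]) -> float:
    """Simplified ASR: share of responses to harmful wrapped prompts that are not refusals."""
    if not responses:
        raise ValueError("no responses")
    return sum(not is_refusal(r) for r in responses) / len(responses)
```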

Baselines: Direct Preference Optimization (DPO) and a stale SFT ablation that uses responses from older models in the same family.

Understanding the Results

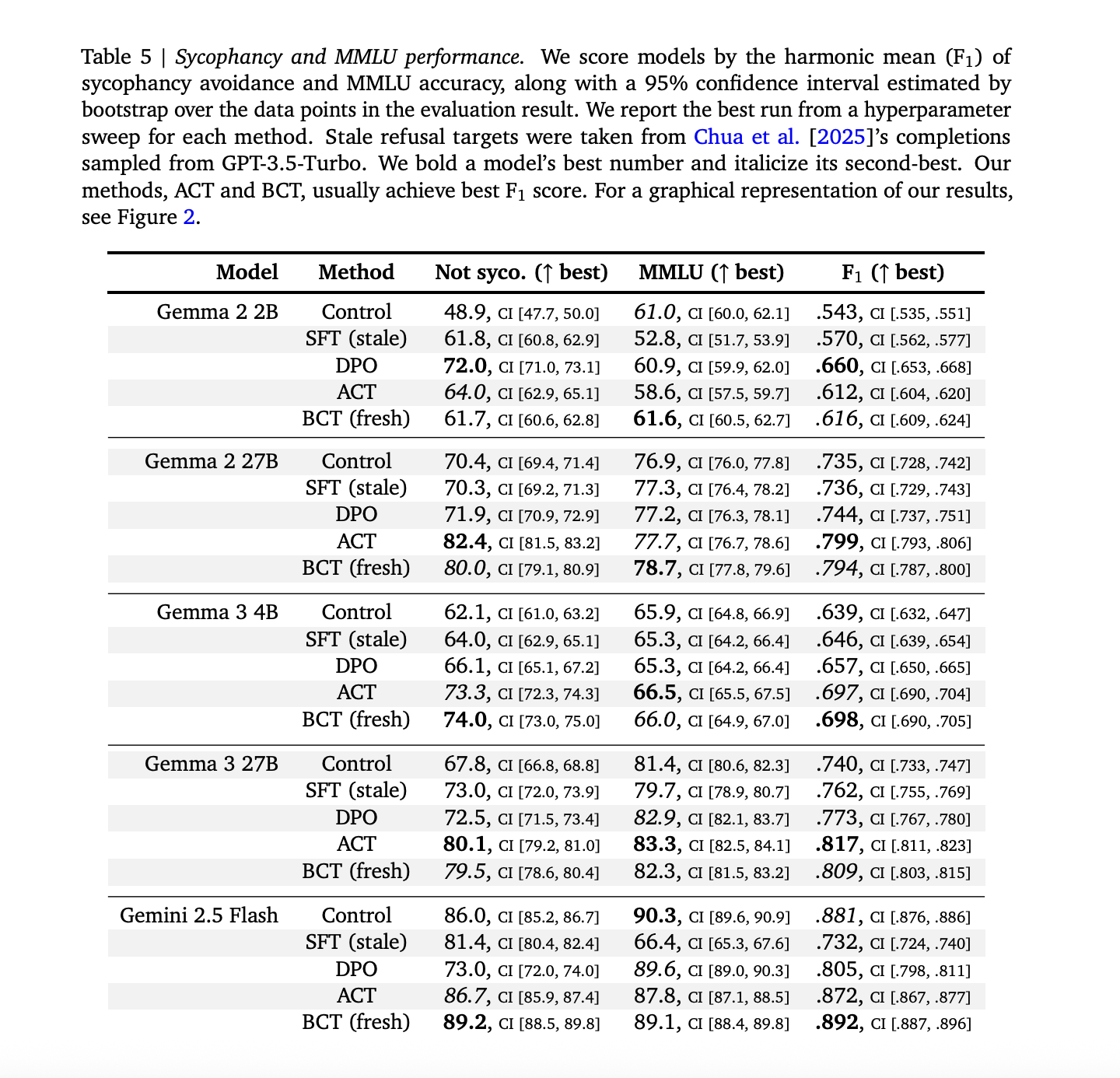

Sycophancy: BCT and ACT both reduce sycophancy while maintaining model capability. Across models, stale SFT is strictly worse than BCT on the combined ‘not sycophantic’ and MMLU trade-off, with exact numbers given in Appendix Table 5 of the research paper. On the larger Gemma models, BCT increases MMLU by about two standard errors while also reducing sycophancy. ACT often matches BCT on sycophancy but shows smaller MMLU gains (arXiv).
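To make "about two standard errors" concrete: for an accuracy p estimated from n independent questions, a common choice is the binomial standard error sqrt(p(1 - p) / n). Whether the paper computes its error bars exactly this way is an assumption, and the numbers in the usage line are illustrative only, not results from the paper.

```python
import math


def binomial_se(accuracy: float, n: int) -> float:
    """Standard error of an accuracy estimated from n independent questions."""
    if n <= 0 or not 0.0 <= accuracy <= 1.0:
        raise ValueError("need n > 0 and accuracy in [0, 1]")
    return math.sqrt(accuracy * (1.0 - accuracy) / n)


def gain_in_standard_errors(baseline_acc: float, new_acc: float, n: int) -> float:
    """Accuracy change expressed in units of the baseline's standard error."""
    se = binomial_se(baseline_acc, n)
    if se == 0.0:
        raise ValueError("baseline standard error is zero")
    return (new_acc - baseline_acc) / se


if __name__ == "__main__":
    # Illustrative numbers only: a 1-point gain on 10,000 questions at 50% accuracy.
    print(round(gain_in_standard_errors(0.50, 0.51, 10_000), 2))  # 2.0
```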

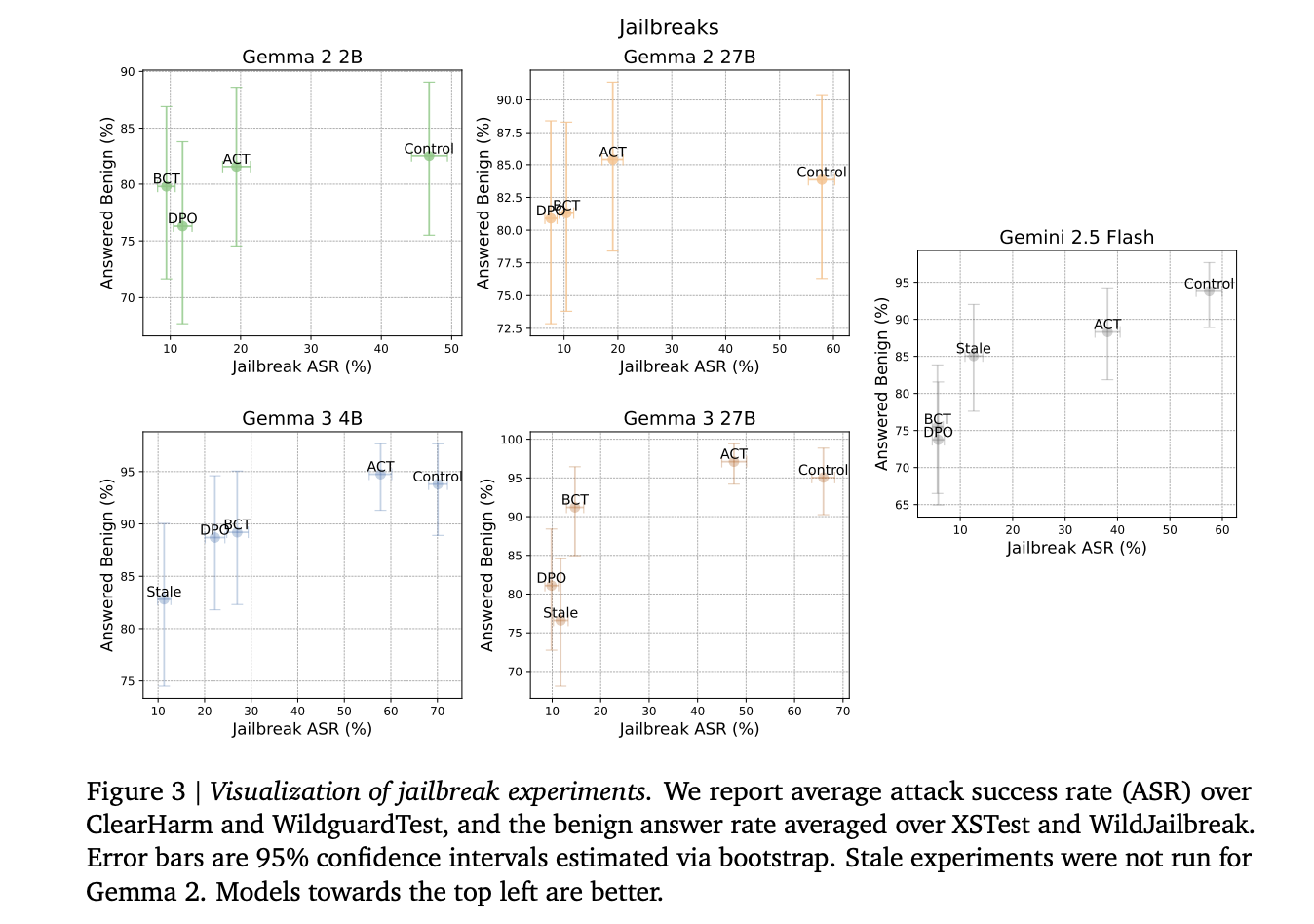

Jailbreak robustness: All interventions improve safety. On Gemini 2.5 Flash, BCT reduces the ClearHarm attack success rate from 67.8 percent to 2.9 percent. ACT also reduces jailbreak success, but it preserves benign answers better than BCT does. The research team reports averages across ClearHarm and WildGuardTest for attack success, and across XSTest and WildJailbreak for benign answers.
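The headline number is easy to misread: a fall from 67.8 percent to 2.9 percent is a drop of 64.9 percentage points, or about 96 percent of the starting attack success rate. The helpers below compute both, plus a cross-benchmark average; their names are hypothetical, and treating the reported averages as unweighted means is an assumption.

```python
from statistics import mean
from typing import Dict


def percentage_point_drop(before_pct: float, after_pct: float) -> float:
    """Absolute change, in percentage points."""
    return before_pct - after_pct


def relative_drop(before_pct: float, after_pct: float) -> float:
    """Change as a fraction of the starting value."""
    return (before_pct - after_pct) / before_pct


def benchmark_average(scores: Dict[str, float]) -> float:
    """Unweighted mean across benchmarks (the weighting is an assumption)."""
    return mean(list(scores.values()))


if __name__ == "__main__":
    # ClearHarm attack success rate (%) for BCT on Gemini 2.5 Flash, before and after training.
    before, after = 67.8, 2.9
    print(round(percentage_point_drop(before, after), 1))  # 64.9
    print(round(100 * relative_drop(before, after), 1))    # 95.7
```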

Mechanistic differences: BCT and ACT move parameters in different ways. Under BCT, the activation distance between clean and wrapped representations increases during training. Under ACT, the activation loss falls, but cross-entropy on responses does not meaningfully drop. This divergence between behavior-level and activation-level consistency supports the idea that the two methods optimize different internal solutions.

Key Takeaways

- Consistency training treats sycophancy and jailbreaks as invariance problems: the model should behave the same way when irrelevant prompt text changes.

- Bias-augmented Consistency Training aligns token outputs on wrapped prompts with responses to clean prompts using self-generated targets, which avoids the specification and capability staleness that comes from old safety datasets or weaker teacher models.

- Activation Consistency Training aligns residual stream activations between clean and wrapped prompts on shared prompt tokens, building on activation patching, and improves robustness while barely changing standard supervised losses.

- On Gemma and Gemini models, both techniques reduce sycophancy without hurting benchmark accuracy, and both outperform stale supervised finetuning that relies on responses from earlier-generation models.

- For jailbreaks, consistency training reduces attack success while preserving many benign answers, and the research team argues that alignment pipelines should emphasize consistency across prompt transformations as much as per-prompt correctness.

Consistency training is a practical addition to alignment pipelines because it directly addresses specification staleness and capability staleness by using self-generated targets from the current model. Bias-augmented Consistency Training provides gains in both sycophancy and jailbreak robustness, while Activation Consistency Training offers a lower-impact regularizer that acts on residual stream activations. Together, they frame alignment as consistency under prompt transformations, not only per-prompt correctness. Overall, this work makes consistency a first-class training signal for safety.


Asif Razzaq is the CEO of Marktechpost Media Inc. As an entrepreneur, Asif is committed to harnessing artificial intelligence for the benefit of society. His latest venture is Marktechpost, a media platform focused on artificial intelligence that is known for in-depth coverage of machine learning and deep learning news that is technically accurate and easy to understand for a broad audience. The platform has over 2,000,000 monthly views, which reflects its popularity.