Robbyant (the embodied AI division within Ant Group) has released LingBot World. This large-scale world model turns video production into an interactive simulator that can be used for games, autonomous driving or embodied agent simulations. The system was designed to create environments that are controllable with high visual quality, dynamic and long time horizons. It is also responsive for real-time control.

The world of text is now available in video format

The majority of text-to-video models produce short videos that appear realistic, but act like passive films. These models don’t model the way actions affect the environment in time. LingBot World was built to be an action-conditioned model of the world. The model learns how a virtual environment transitions so that camera movement and keyboard inputs drive future frames.

It learns to conditionally distribute future video tokens based on previous frames, prompts for language and discrete action. During training, the model can predict sequences of up to 60 seconds. It can auto-regressively assemble coherent video streams up to 10 minutes long, maintaining scene structure.

Data Engine, Interactive Trajectories to Web Video

LingBot World is designed around a data engine that can be accessed from anywhere. The system provides a rich and aligned view of how different actions affect the world, while also covering various real-life scenes.

This pipeline is composed of 3 data sources.

- Videos of people, animals, and vehicles filmed from first-person and third-person perspectives.

- RGB frame data is paired strictly with controls like W, A. S. D. and camera parameters.

- Unreal Engine is used to render synthetic trajectory, which includes clean frames and camera intrinsics.

A profiling step standardizes the heterogeneous corpus after collection. It can filter for resolution and length, separate videos into clips and estimate missing camera parameter using geometry and pose model. The model uses a vision language to score clips for motion, quality and type of view. It then chooses the curated set.

A module for hierarchical text captioning builds three levels of text control:

- We can add narrative captions to the entire trajectory, even if it includes camera motion.

- Scene static captions describe an environment’s layout in static.

- Descriptions of short, densely packed time periods that are focused on local dynamics

It is essential for the long horizon consistency that this separation allows you to separate out static and motion patterns.

Video backbone, architecture and action conditioning

LingBot starts with Wan2.2, an image-to-video diffusion transformer that uses 14B parameters. The backbone captures open domain videos priors. Robbyant Team extends this into a mix of DiT experts, with 2 expert. The total number of parameters is 28, but each expert only has 14B. The inference costs are kept similar to those of a dense model with 14B parameters while increasing capacity.

Using a curriculum, training sequences can be extended from 5 seconds up to 60. This schedule will increase the percentage of timesteps with high levels of noise, stabilizing global layouts in long-term contexts. It also reduces mode collapsing for large rollouts.

Actions are directly injected into transformer blocks to make them interactive. Camera rotations are encoded with Plücker embeddings. Multi-hot vectors for keyboard keys like W, A and D are encoded. These are then fused with the adaptive layer normalization module, modulating hidden states within DiT. The main video backbone is frozen and only the adapter layer fine-tuned. This allows the model to retain its visual quality while gaining action responsiveness using a smaller dataset.

The training uses image to video as well as video to video task continuation. A single image can be used to create future frames. The model can be extended by a single clip. The internal transition can be started at any time point.

LingBot World Fast: distillation in real-time use

LingBot World Base is a mid-trained version that still uses full attention and multi-step diffusion, both of which can be expensive in real-time interaction. Robbyant introduces LingBot-World-Fast, an accelerated version.

Initialized by the expert in high noise, this model replaces temporal attention completely with causal block attention. Attention is bidirectional within each block of time. The causality is across the blocks. The model is able to stream auto-regressively using this design because it supports caching of key values.

A diffusion forcing technique is employed in the distillation process. It is important that the student be trained to see both clean and noisy latents. The Distribution Matching Distillation head is used in conjunction with an adversarial discriminator. Only the discriminator is updated by an adversarial lose. With the distillation losses, updates are made to the student network. This stabilizes and maintains action following while maintaining temporal coherence.

LingBot World Fast reached 16 frames/second in experiments when processing videos at 480p on 1 GPU. The interaction time was also kept under 1 second.

The emerging memory and the long-horizon behavior

LingBot-World has an emergent memory, which is one of its most fascinating features. The model retains its global consistency, even when it is not explicitly represented in 3D using Gaussian splatting. The structure is consistent when the camera returns to a Stonehenge landmark after 60 seconds. If a vehicle leaves the image and then returns, its position is physically plausible, rather than frozen or reset.

This model is also capable of supporting ultra-long sequences. [The research team has shown coherent video production that can last up to 10 minute with a stable narrative and layout.]

Results of the VBench model and their comparison with other models

To perform a quantitative analysis, the team of researchers used VBench to evaluate 100 video clips, all longer than thirty seconds. LingBot World is compared with 2 world models that are recent, Yume 1.5 and HY World-1.5.

LingBot World has a report on VBench:

The scores for dynamic degree, aesthetics and imaging quality are all higher than the baseline scores. This margin of dynamic degree, which is 0.8857, versus 0.7612 or 0.7217 indicates richer scenes transitions, and motions that are more complex and responsive to user inputs. Motion smoothness, temporal flicker and overall consistency are all comparable with the best baseline.

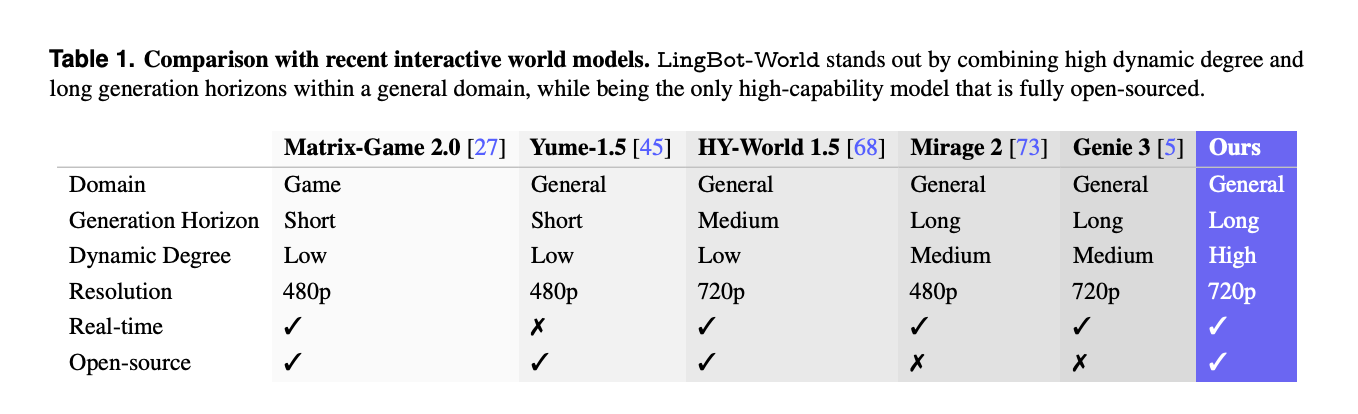

LingBot-World stands out as one of only a few world models with a combination of general domain coverage and 720p HD resolution, high dynamic level, and real-time capability.

Application, promptable universes, agents, and 3D Reconstruction

LingBot-World, beyond video synthesis is designed to be a testbed of embodied AI. This model allows promptable events in the world, such as changing weather conditions, styles, or lighting. Text instructions can also be used to inject local events like fireworks, or animals moving over time.

The system can be used to train agents for downstream actions, such as Qwen3VL-2B which predicts control policies based on images. The generated video streams can be input into 3D reconstruction pipelines that produce stable point cloud for indoor, synthetic and outdoor scenes.

The Key Takeaways

- LingBot is a world action model, which extends text-to-video into text simulation. Keyboard actions and camera movements directly control the long horizon video rolls up to about 10 minutes.

- This system uses a data engine with a single database that includes web videos, Unreal Engine trajectory trajectories and game logs, as well as layered narratives, static scenes and temporal captions.

- Core backbone is 28B parameters of the experts diffusion transformer built with Wan2.2. There are 2 experts each of 14B and two action adapters. The visual backbone stays frozen.

- LingBot World-Fast, a distillation of the original LingBot, uses diffusion force, block causal attention and matching distribution to reach 16 frames per seconds at 480p using 1 GPU. The end-to-end latency is reported to be under one second.

- LingBot-World has reported the highest image quality, aesthetics and dynamic level among Yume-1.5 & HY-World-1.5 on VBench. It also shows the most stable and long-ranged structure, suitable for embodied robots and 3D reconstructing.

Take a look at the Paper, Repo, Project page The following are some examples of how to get started: Model Weights. Also, feel free to follow us on Twitter Don’t forget about our 100k+ ML SubReddit Subscribe now our Newsletter. Wait! Are you using Telegram? now you can join us on telegram as well.