Recent advances in LLMs have driven a rapid rise in the popularity of Deep Research (DR) agents. Most popular public DR agents, however, are not designed around the way humans think and write. They lack the structured steps that support human researchers, such as drafting, searching, and using feedback. Current DR agents assemble test-time algorithms and various tools without a coherent framework. This highlights the need for purpose-built frameworks that can rival or surpass human research capabilities. Because current methods lack human-inspired cognitive processes, a gap remains in how AI agents handle complex research tasks.

To generate research proposals, existing test-time scaling work uses iterative refinement algorithms, debate mechanisms, tournaments for ranking hypotheses, and self-critique systems. To produce detailed answers, multi-agent systems employ planners, coordinators, researchers, and reporters. Some frameworks allow a human co-pilot mode for feedback integration, while others rely on coordinators, analysts, researchers, or reporters. Agent tuning approaches focus on multitask learning objectives, component-level supervised fine-tuning, and reinforcement learning to improve search and browsing abilities. LLM diffusion models attempt to break the autoregressive sampling assumption: they generate a complete noisy draft and then iteratively denoise its tokens.

Researchers at Google introduced the Test-Time Diffusion Deep Researcher (TTD-DR). It is inspired by the iterative nature of human research, which involves repeated cycles of thinking, searching, and refining. TTD-DR conceptualizes report generation as a diffusion process: it begins with a preliminary draft, an updatable outline that evolves and guides the research direction. The draft is refined iteratively through a "denoising" process, which is informed at every step by a dynamic retrieval mechanism that integrates external information. This draft-centric design makes report writing more timely and reduces the information lost during the search process. TTD-DR delivers state-of-the-art performance on benchmarks that require intensive search and multi-hop reasoning.
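
The draft-centric loop described above can be illustrated with a toy sketch. This is not the paper's implementation: the `CORPUS` dictionary stands in for a real search tool, the `[TODO]` marker stands in for "noise" in the draft, and every function name here is hypothetical. It only shows the control flow of a draft that chooses the next query, with retrieved evidence used to revise the draft at each step.

```python
from typing import List, Optional, Tuple

# Hypothetical toy corpus standing in for an external search tool.
CORPUS = {
    "diffusion": "Diffusion models refine a noisy sample over several steps.",
    "retrieval": "Retrieval brings in external documents at query time.",
}

NOISE = "[TODO]"  # assumed marker for a section that still needs evidence


def make_noisy_draft(outline: List[str]) -> List[str]:
    """Start from a rough draft: one unresolved line per outline topic."""
    return [f"{topic}: {NOISE}" for topic in outline]


def next_query(draft: List[str]) -> Optional[Tuple[int, str]]:
    """Let the current draft decide what to search for next."""
    for index, line in enumerate(draft):
        if line.endswith(NOISE):
            return index, line.split(":")[0]
    return None  # nothing left to resolve


def search(query: str) -> str:
    """Stand-in for a retrieval call."""
    return CORPUS.get(query, "No evidence found.")


def denoise(draft: List[str], index: int, evidence: str) -> List[str]:
    """Revise one section of the draft using the retrieved evidence."""
    revised = list(draft)
    topic = revised[index].split(":")[0]
    revised[index] = f"{topic}: {evidence}"
    return revised


def run_denoising(outline: List[str], max_steps: int = 10):
    """Alternate between retrieval and draft revision until the draft is clean."""
    draft = make_noisy_draft(outline)
    history = [draft]
    for _ in range(max_steps):
        target = next_query(draft)
        if target is None:
            break
        index, query = target
        draft = denoise(draft, index, search(query))
        history.append(draft)
    return draft, history
```

Calling `run_denoising(["diffusion", "retrieval"])` returns the final draft together with the intermediate drafts. In a real agent, `next_query`, `search`, and `denoise` would each be LLM or tool calls rather than string operations.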

TTD-DR addresses the limitations of existing DR agents, which rely on linear or parallelized processes. The proposed backbone DR agent contains three major stages: Research Plan Generation, Iterative Search and Synthesis, and Final Report Generation. Each stage consists of unit LLM agents and workflows. A self-evolving algorithm is applied to improve the performance of each stage, helping it find and preserve high-quality context. Inspired by recent self-evolution work, the algorithm can be implemented in parallel, sequential, and loop workflows, and it can be applied to every stage agent to improve overall output quality.
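
The article does not spell out how the self-evolving algorithm works internally, so the following is only a minimal sketch under stated assumptions: several candidate outputs are generated, each is scored, each is revised using its score as feedback, and the results are merged. The `REQUIRED` checklist and all function names are hypothetical stand-ins for what would be LLM calls in practice. It is applied here to a toy version of the Research Plan Generation stage.

```python
from typing import Callable, List

Plan = List[str]

# Hypothetical checklist a toy "judge" uses to score a research plan.
REQUIRED = ["scope", "method", "evaluation"]


def generate(seed: int) -> Plan:
    """Produce an initial variant; each seed covers a different item."""
    return [REQUIRED[seed % len(REQUIRED)]]


def score(plan: Plan) -> float:
    """Toy fitness: fraction of checklist items the plan covers."""
    return len(set(plan) & set(REQUIRED)) / len(REQUIRED)


def revise(plan: Plan, fitness: float) -> Plan:
    """Use the feedback to add one missing item, if any."""
    if fitness >= 1.0:
        return plan
    missing = [item for item in REQUIRED if item not in plan]
    return plan + missing[:1]


def merge(plans: List[Plan]) -> Plan:
    """Combine all variants into one plan, dropping duplicates."""
    merged: Plan = []
    for plan in plans:
        for item in plan:
            if item not in merged:
                merged.append(item)
    return merged


def self_evolve(
    generate: Callable[[int], Plan],
    score: Callable[[Plan], float],
    revise: Callable[[Plan, float], Plan],
    merge: Callable[[List[Plan]], Plan],
    n_variants: int = 3,
    n_rounds: int = 1,
) -> Plan:
    """Generate variants, revise each from its own score, then merge."""
    variants = [generate(i) for i in range(n_variants)]
    for _ in range(n_rounds):
        variants = [revise(v, score(v)) for v in variants]
    return merge(variants)
```

Because `self_evolve` only depends on the four callables, the same wrapper could in principle be reused for the search and report stages by swapping in stage-specific functions. That reuse is a design assumption of this sketch, not a detail confirmed by the article.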

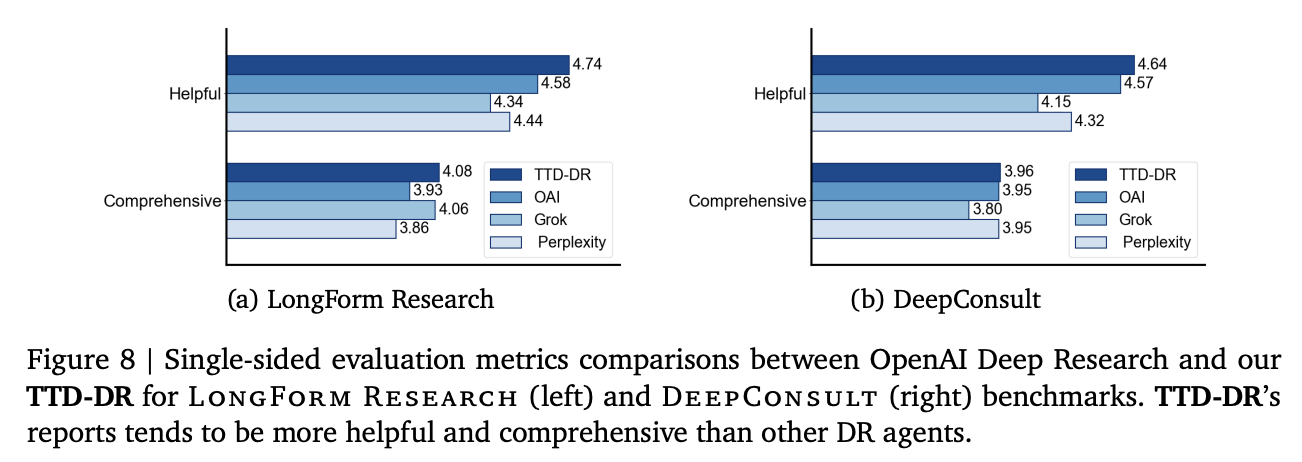

TTD-DR achieves a win rate of 69.1% against OpenAI Deep Research on tasks that require long-form research reports. It also outperforms OpenAI Deep Research on short-form question datasets with ground-truth answers, by 4.8%, 7.7%, and 1.7%. Its auto-rater scores for Helpfulness and Comprehensiveness are high, particularly on the LongForm Research dataset. The self-evolution algorithm achieves win rates of 60.9% and 59% against OpenAI Deep Research on LongForm Research and DeepConsult, respectively. Correctness scores on the HLE datasets improve by 1.5% and 2.8%, but performance on GAIA remains 4.4% below OpenAI DR. Adding Diffusion with Retrieval lets TTD-DR outperform OpenAI Deep Research on all benchmarks.

In conclusion, Google presents TTD-DR as a method that addresses fundamental limitations of existing DR agents through human-inspired cognitive design. The framework treats research report generation as a diffusion process, with an updatable draft that guides the research direction. TTD-DR is further enhanced by self-evolving algorithms applied to every workflow component, which ensures high-quality context generation throughout the research process. TTD-DR delivers superior performance on benchmarks that require intensive search and multi-hop reasoning.
