Researchers from the University of Liverpool and Huawei Noah’s Ark Lab have published a report on Deep Research Agents (DR Agents), a new paradigm for autonomous research. These systems use Large Language Models (LLMs) to handle complex, long-horizon tasks that demand dynamic reasoning, adaptive planning, iterative tool use, and structured analytical outputs. In contrast to traditional retrieval-augmented generation (RAG) methods, DR Agents can navigate evolving user intent and ambiguous information landscapes by integrating structured APIs with browser-based retrieval.

Limitations of Existing Research Frameworks

Deep Research Agents follow an earlier generation of LLM systems that focused on reasoning and factual retrieval. RAG improved factual grounding, and tools such as FLARE and Toolformer enabled basic tool use. These earlier systems, however, lacked real-time adaptability, reasoning depth, and extensibility. They struggled with long-context coherence, efficient multi-turn retrieval, and dynamic workflow adjustment, all of which are key requirements for real-world research.

Architectural Innovations in Deep Research Agents

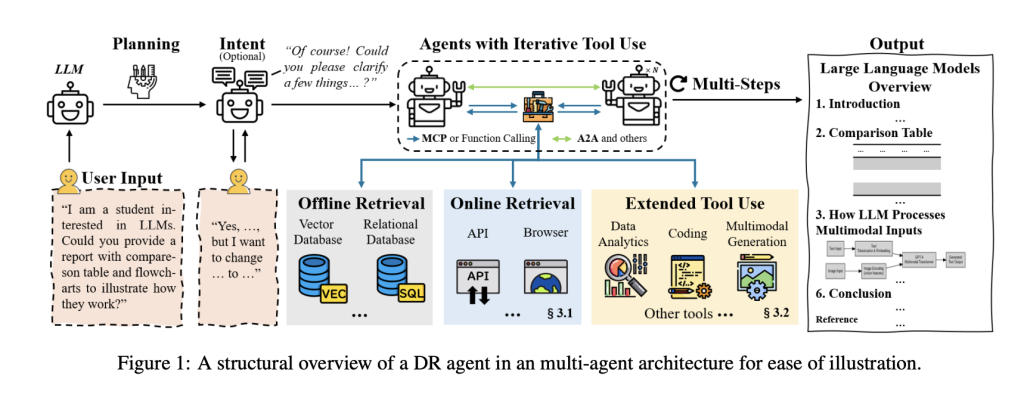

Deep Research Agents, or DR Agents, are designed to overcome the shortcomings of static reasoning systems. Technical contributions include:

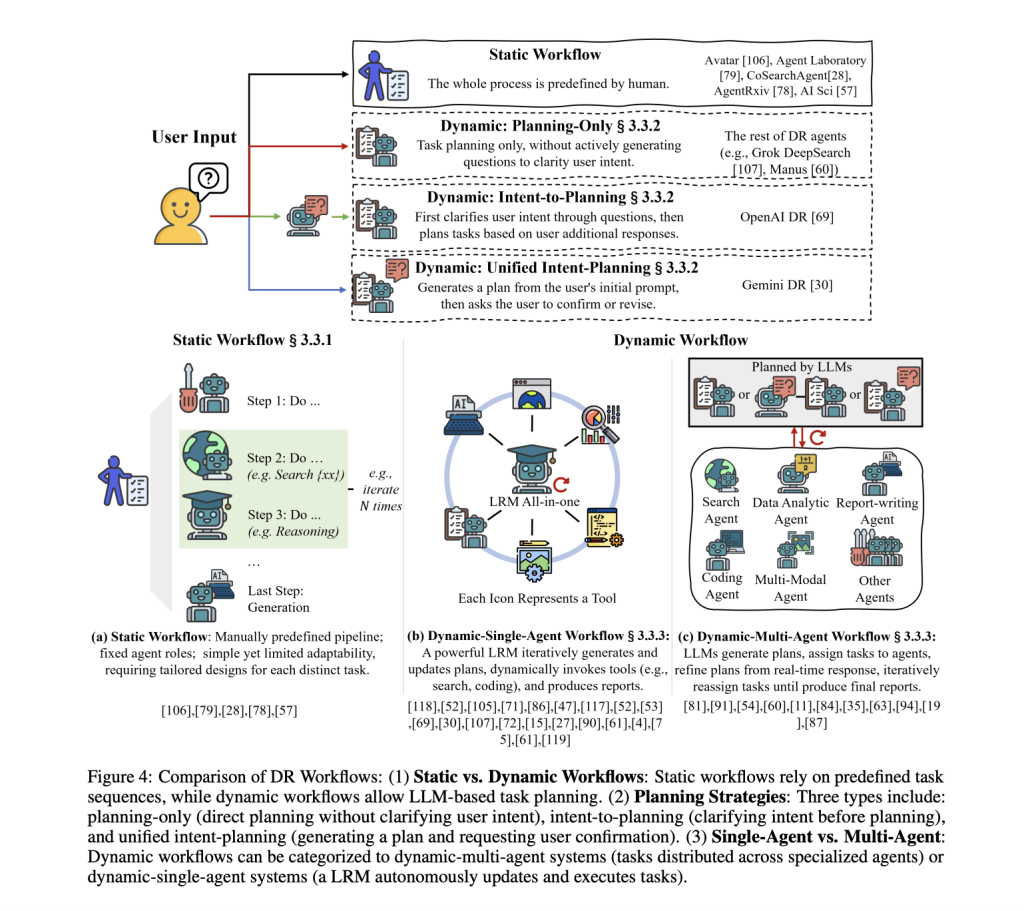

- Workflow classification: Distinguishes static (manually defined) research workflows from dynamic ones that adapt in real time.

- Model Context Protocol (MCP): A standardized interface that enables secure, consistent interaction with external tools and APIs.

- Agent-to-Agent (A2A) protocol: Facilitates structured, decentralized communication between agents so they can execute tasks collaboratively.

- Hybrid retrieval methods: Data can be acquired through APIs (structured) or through a browser (unstructured).

- Multi-modal tool use: Integrates code execution, data analysis, multimodal generation, and memory optimization within the reasoning loop.
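The difference between static and dynamic workflows can be illustrated with a toy example. The sketch below is only an illustration under stated assumptions: the step functions `search`, `analyze`, and `write_report` and the `needed_evidence` threshold are hypothetical stand-ins for LLM or tool calls, not part of the report.

```python
from typing import Callable, Dict, List

# Hypothetical step functions; a real agent would call an LLM or a tool here.
def search(state: Dict) -> Dict:
    state["evidence"] = state.get("evidence", 0) + 1
    state["trace"].append("search")
    return state

def analyze(state: Dict) -> Dict:
    state["trace"].append("analyze")
    return state

def write_report(state: Dict) -> Dict:
    state["trace"].append("report")
    return state

def run_static(steps: List[Callable[[Dict], Dict]]) -> Dict:
    """Static workflow: the step order is fixed before execution starts."""
    state: Dict = {"trace": []}
    for step in steps:
        state = step(state)
    return state

def run_dynamic(needed_evidence: int, max_steps: int = 10) -> Dict:
    """Dynamic workflow: the next step is chosen from the current state."""
    state: Dict = {"trace": [], "evidence": 0}
    for _ in range(max_steps):
        if state["evidence"] < needed_evidence:
            state = search(state)      # not enough evidence yet, search again
        elif "analyze" not in state["trace"]:
            state = analyze(state)
        else:
            return write_report(state)
    return state                       # step budget exhausted

static_trace = run_static([search, analyze, write_report])["trace"]
dynamic_trace = run_dynamic(needed_evidence=3)["trace"]
print(static_trace)   # ['search', 'analyze', 'report']
print(dynamic_trace)  # ['search', 'search', 'search', 'analyze', 'report']
```

The static runner always executes the same three steps, while the dynamic runner repeats the search step until its (assumed) evidence threshold is met.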

The System Pipeline: From Queries to Reports

Deep Research Agents typically process a research query by:

- Understanding intent through planning-only, intent-to-planning, or unified intent-planning strategies

- Retrieving content through APIs and dynamic browser environments (e.g., arXiv, Wikipedia, Google Search)

- Invoking tools through MCP for execution tasks such as scripting, analytics, or media processing

- Producing structured reports with evidence-based summaries, tables, or visuals
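The four stages above can be sketched as a small pipeline. This is a minimal sketch, not the architecture of any particular system: `ResearchState`, the comma-splitting planner, the lambda "sources", and the `count` tool are all hypothetical placeholders for an LLM planner, real retrieval backends, and MCP-exposed tools.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

@dataclass
class ResearchState:
    query: str
    plan: List[str] = field(default_factory=list)
    evidence: List[str] = field(default_factory=list)
    results: Dict[str, object] = field(default_factory=dict)

def plan_from_intent(state: ResearchState) -> ResearchState:
    # Stand-in for an LLM planner: one sub-question per comma-separated topic.
    state.plan = [part.strip() for part in state.query.split(",") if part.strip()]
    return state

def retrieve(state: ResearchState,
             sources: Dict[str, Callable[[str], str]]) -> ResearchState:
    # Query every source (API-like or browser-like) for every sub-question.
    for sub_question in state.plan:
        for name, fetch in sources.items():
            state.evidence.append(f"[{name}] {fetch(sub_question)}")
    return state

def invoke_tool(state: ResearchState,
                tools: Dict[str, Callable[[List[str]], object]],
                tool_name: str) -> ResearchState:
    # Stand-in for a tool call; a real agent would go through a tool protocol.
    state.results[tool_name] = tools[tool_name](state.evidence)
    return state

def build_report(state: ResearchState) -> str:
    # Structured output: a title, an evidence table, and one tool result.
    lines = [f"Report: {state.query}", "", "| # | Evidence |", "|---|---|"]
    lines += [f"| {i} | {item} |" for i, item in enumerate(state.evidence, 1)]
    lines += ["", f"Evidence items counted: {state.results.get('count')}"]
    return "\n".join(lines)

sources = {
    "api": lambda q: f"structured result for {q}",
    "browser": lambda q: f"page text for {q}",
}
tools = {"count": len}

state = plan_from_intent(ResearchState("agent memory, tool use"))
state = retrieve(state, sources)
state = invoke_tool(state, tools, "count")
report = build_report(state)
print(report)
```

Keeping all intermediate results in one state object is a design choice made here for readability; it makes each stage easy to swap out or rerun.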

Memory mechanisms, such as knowledge graphs, vector databases or structured repositories, enable agents to reduce redundancy and manage reasoning in long contexts.
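To make the redundancy point concrete, here is a small sketch of a memory store that skips evidence it has already seen. It assumes a plain in-process dictionary keyed by a content hash and a substring lookup; the class name `EvidenceMemory` is hypothetical, and a real agent would more likely use one of the stores named above (a vector database or knowledge graph) with semantic matching.

```python
import hashlib
from typing import Dict, List

class EvidenceMemory:
    """Toy memory: deduplicates snippets and supports keyword recall."""

    def __init__(self) -> None:
        self._items: Dict[str, str] = {}

    @staticmethod
    def _key(text: str) -> str:
        # Normalize case and whitespace so trivially different copies collide.
        normalized = " ".join(text.lower().split())
        return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

    def add(self, text: str) -> bool:
        """Store a snippet; return False if an equivalent one is already stored."""
        key = self._key(text)
        if key in self._items:
            return False
        self._items[key] = text
        return True

    def recall(self, term: str) -> List[str]:
        """Return stored snippets containing the term (case-insensitive)."""
        term = term.lower()
        return [text for text in self._items.values() if term in text.lower()]

    def __len__(self) -> int:
        return len(self._items)

memory = EvidenceMemory()
first = memory.add("RAG uses static retrieval.")
duplicate = memory.add("rag  uses STATIC retrieval.")   # same text after normalization
second = memory.add("MCP standardizes tool access.")
print(first, duplicate, second, len(memory))  # True False True 2
print(memory.recall("mcp"))                   # ['MCP standardizes tool access.']
```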

Comparison with RAG and Traditional Tool-Use Agents

Unlike RAG models, which rely on static retrieval pipelines, Deep Research Agents:

- Plan in multiple steps as task goals change

- Adapt their retrieval strategy based on task progress

- Coordinate multiple specialized agents (in multi-agent settings)

- Use asynchronous workflows

This architecture supports more coherent, flexible and scalable execution of research tasks.
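As a rough illustration of asynchronous coordination between specialized agents, the sketch below runs three placeholder agents concurrently with Python's `asyncio`. The agent names, subtasks, and delays are invented for the example, and `asyncio.sleep` stands in for real retrieval or tool latency.

```python
import asyncio
from typing import List, Tuple

async def specialist(name: str, subtask: str, delay: float) -> str:
    # Hypothetical specialized agent; the sleep simulates I/O-bound work.
    await asyncio.sleep(delay)
    return f"{name}: finished '{subtask}'"

async def coordinate(subtasks: List[Tuple[str, str, float]]) -> List[str]:
    # Launch all agents concurrently; gather returns results in input order.
    jobs = [specialist(name, task, delay) for name, task, delay in subtasks]
    return await asyncio.gather(*jobs)

results = asyncio.run(coordinate([
    ("retriever", "collect sources", 0.03),
    ("analyst", "run statistics", 0.01),
    ("writer", "draft outline", 0.02),
]))
for line in results:
    print(line)
```

Because the agents wait concurrently, total wall-clock time is close to the slowest agent's delay, not the sum of all delays.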

Industry Implementations of DR Agents

- OpenAI DR: Uses an o3 reasoning model with RL-based dynamic workflows, multimodal retrieval, and code-enabled report generation.

- Gemini DR: Built on Gemini-2.0 Flash; supports large context windows, asynchronous workflows, and multi-modal task management.

- Grok DeepSearch: Combines sparse attention, browser-based retrieval, and a sandboxed execution environment.

- Perplexity DR: Applies hybrid LLM orchestration with iterative web search.

- Microsoft Researcher & Analyst: Integrate OpenAI models within Microsoft 365 for secure, domain-specific research pipelines.

Benchmarking Performance

Deep Research Agents are evaluated using a combination of QA benchmarks and task-execution benchmarks:

- QA: HotpotQA, GPQA, 2WikiMultihopQA, TriviaQA

- Complex Research: MLE-Bench, BrowseComp, GAIA, HLE

These benchmarks measure retrieval depth, reasoning consistency, and structured reporting. Agents such as DeepResearcher and SimpleDeepSearcher consistently outperform traditional systems.
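For the QA side, answers are commonly scored with exact match and token-level F1. The sketch below shows that style of scoring under simplifying assumptions: the normalization (lowercasing, dropping punctuation and English articles) follows common practice but is not the official scorer of any benchmark listed above, and the example strings are toy inputs, not benchmark data.

```python
import re
import string
from collections import Counter

def normalize(text: str) -> str:
    # Lowercase, drop punctuation and English articles, collapse whitespace.
    text = text.lower()
    text = "".join(ch for ch in text if ch not in string.punctuation)
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return " ".join(text.split())

def exact_match(prediction: str, gold: str) -> bool:
    return normalize(prediction) == normalize(gold)

def token_f1(prediction: str, gold: str) -> float:
    pred_tokens = normalize(prediction).split()
    gold_tokens = normalize(gold).split()
    if not pred_tokens or not gold_tokens:
        # Both empty counts as a match; one empty counts as a miss.
        return float(pred_tokens == gold_tokens)
    overlap = sum((Counter(pred_tokens) & Counter(gold_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred_tokens)
    recall = overlap / len(gold_tokens)
    return 2 * precision * recall / (precision + recall)

em = exact_match("The Eiffel Tower.", "eiffel tower")
f1 = token_f1("Paris, France", "Paris")
print(em)            # True
print(round(f1, 3))  # 0.667
```

Execution-oriented benchmarks generally need task-specific checks, so this kind of string scoring applies only to short-answer QA.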

FAQs

Q1: What are Deep Research Agents?

A: DR Agents are LLM-based systems that autonomously conduct multi-step research workflows using dynamic planning.

Q2: How are DR Agents better than RAG models?

A: DR Agents support multi-hop retrieval, iterative tool use, and real-time report synthesis.

Q3: What protocols do DR Agents use?

A: MCP (for tool interaction) and A2A (for agent collaboration).

Q4: Are these systems production-ready?

A: Yes. OpenAI, Google, Microsoft, and other companies have deployed DR Agents in public-facing and enterprise applications.

Q5: How are DR Agents evaluated?

A: With QA benchmarks such as HotpotQA and HLE, and with execution benchmarks such as MLE-Bench and BrowseComp.

Check out the Paper. All credit for this research goes to the researchers of this project.


Nikhil is an intern consultant at Marktechpost. He has an integrated dual degree in Materials from the Indian Institute of Technology Kharagpur. Nikhil is passionate about AI/ML and continually researches its applications in fields such as biomaterials and biomedical science. With a background in Material Science, he enjoys exploring new advances and contributing to them.